A Comprehensive Framework for Assessing Pharmacophore Model Performance in Drug Discovery



Abstract

This article provides a comprehensive guide for researchers and drug development professionals on evaluating pharmacophore model performance. It covers foundational concepts of pharmacophore modeling, key methodologies and their real-world applications in virtual screening and lead optimization, strategies for troubleshooting common challenges and model refinement, and rigorous statistical validation and comparative analysis techniques. By integrating both traditional and emerging AI-driven approaches, this review establishes a robust framework for assessing model quality, ensuring reliability, and maximizing the impact of pharmacophore models in accelerating drug discovery pipelines.

Understanding the Core Principles of Pharmacophore Modeling

In the field of medicinal chemistry and computer-aided drug design, the pharmacophore concept serves as a fundamental principle for understanding and predicting the biological activity of molecules. A pharmacophore provides an abstract representation of the molecular interactions essential for a ligand to bind to its biological target. The official IUPAC definition characterizes a pharmacophore as "an ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or block) its biological response" [1] [2]. This definition emphasizes that a pharmacophore is not a specific molecular structure itself, but rather the three-dimensional arrangement of functional features that enable molecular recognition.

This guide explores the evolution of the pharmacophore concept from its historical origins to its modern applications, with a specific focus on objectively comparing the performance of different pharmacophore modeling approaches against other computational methods. For researchers and drug development professionals, understanding these performance characteristics is crucial for selecting appropriate methodologies in virtual screening and lead optimization campaigns. We will examine quantitative performance data, detailed experimental protocols, and emerging trends in pharmacophore-based drug discovery to provide a comprehensive resource for assessing pharmacophore model performance within a broader research context.

Historical Evolution and Key Definitions

The Origins of the Pharmacophore Concept

The conceptual foundation of the pharmacophore dates back to the late 19th and early 20th centuries, although the term itself was not yet in use. Paul Ehrlich's pioneering work on chemotherapy and his concept of "magic bullets" established the principle of selective molecular interactions between drugs and their targets [3]. Emil Fischer's "lock and key" analogy of 1894 advanced this understanding by suggesting that a ligand and its receptor fit together like a key in a lock [3]. Historically, the term "pharmacophore" was often used loosely to denote the structural or functional elements shared by a set of compounds and essential for activity toward a particular biological target [4].

The modern conceptualization of the pharmacophore was significantly advanced by Lemont Kier, who popularized the concept in 1967 and first used the term in a 1971 publication [1]. This development moved the understanding beyond specific functional groups toward a more abstract description of stereoelectronic molecular properties. Interestingly, despite common attributions, neither Paul Ehrlich nor his works mention the term "pharmacophore" or make use of the modern concept [1].

Modern IUPAC Definition and Interpretation

The formal IUPAC definition, published in the 1998 IUPAC recommendations on medicinal chemistry terminology, provides precise wording that distinguishes pharmacophores from related concepts such as "privileged structures" [4]. According to this definition:

- A pharmacophore represents an ensemble of essential steric and electronic features

- These features must ensure optimal supramolecular interactions with a specific biological target

- The features are necessary to trigger or block the biological response

This definition clarifies that pharmacophores do not represent specific functional groups or structural fragments, but rather the abstract spatial arrangement of chemical functionalities that enable binding and activity [4]. This abstraction allows structurally diverse molecules sharing the same pharmacophore to be recognized by the same binding site and exhibit similar biological profiles—a property known as "scaffold hopping" capability [5] [4].

Core Features and Model Development

Fundamental Pharmacophore Features

Pharmacophore models incorporate specific chemical features that mediate ligand-receptor interactions. These typical features include [1] [6] [4]:

- Hydrophobic centroids (H): Represent areas favorable for hydrophobic interactions

- Aromatic rings (AR): Enable π-π stacking and cation-π interactions

- Hydrogen bond acceptors (HBA): Sites capable of accepting hydrogen bonds

- Hydrogen bond donors (HBD): Sites capable of donating hydrogen bonds

- Cations/Positive ionizable groups (PI): Positively charged or ionizable features

- Anions/Negative ionizable groups (NI): Negatively charged or ionizable features

These features are typically represented as geometric entities like spheres, vectors, or planes in three-dimensional space, with each feature type capable of establishing specific non-bonding interactions with complementary features in the biological target [4]. A well-defined pharmacophore model often includes both hydrophobic volumes and hydrogen bond vectors to comprehensively represent the interaction landscape [1].
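The geometric representation described above can be illustrated with a minimal sketch. It assumes the simplest case: every feature is a tolerance sphere, and the ligand's feature points are already aligned to the query's coordinate frame. The `Feature` class, the `matches` helper, and all coordinates and radii are hypothetical and do not correspond to any particular software package.

```python
from dataclasses import dataclass
from math import dist


@dataclass(frozen=True)
class Feature:
    kind: str             # feature label, e.g. "HBA", "HBD", "H", "AR", "PI", "NI"
    center: tuple         # (x, y, z) position in angstroms
    radius: float = 1.0   # tolerance sphere radius in angstroms (illustrative value)


def matches(query, ligand_points):
    """Return True if every query feature has a ligand point of the same
    kind inside its tolerance sphere. Assumes pre-aligned coordinates."""
    for feat in query:
        found = any(
            kind == feat.kind and dist(feat.center, xyz) <= feat.radius
            for kind, xyz in ligand_points
        )
        if not found:
            return False
    return True


# Hypothetical two-feature query and one ligand conformer.
query = [
    Feature("HBA", (0.0, 0.0, 0.0)),
    Feature("AR", (4.0, 0.0, 0.0), radius=1.5),
]
ligand = [
    ("HBA", (0.3, 0.2, 0.0)),
    ("AR", (4.8, 0.5, 0.0)),
    ("H", (7.0, 1.0, 0.0)),
]

print(matches(query, ligand))
```

Real tools also handle alignment, directional constraints on hydrogen bond vectors, and conformer ensembles; this sketch covers only the sphere-overlap test.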

Pharmacophore Model Development Workflow

The process for developing a pharmacophore model follows a systematic approach [1]:

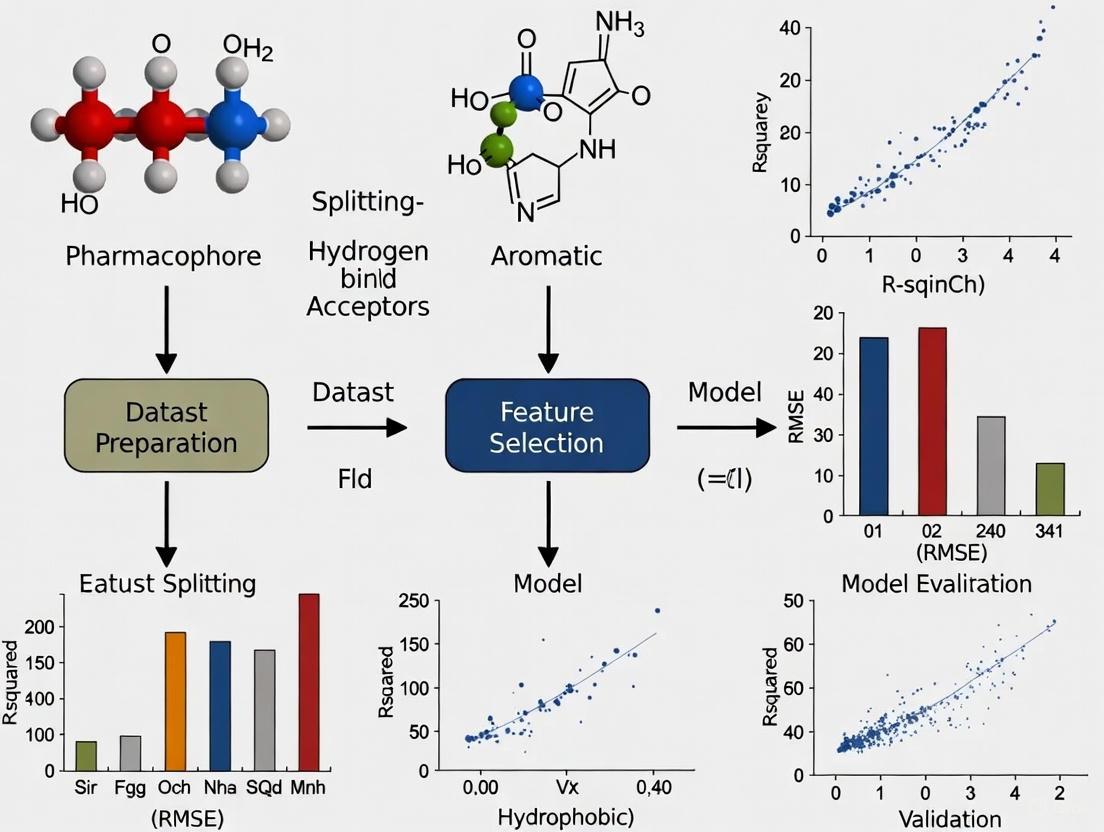

Figure 1: The systematic workflow for developing pharmacophore models, highlighting the iterative nature of model validation and refinement.

Select a training set of ligands: Choose a structurally diverse set of molecules, including both active and inactive compounds, to ensure the model can discriminate between molecules with and without bioactivity [1].

Conformational analysis: Generate a set of low-energy conformations for each molecule that likely contains the bioactive conformation [1].

Molecular superimposition: Superimpose all combinations of the low-energy conformations of the molecules, fitting similar functional groups common to all molecules in the set. The set of conformations that results in the best fit is presumed to be the active conformation [1].

Abstraction: Transform the superimposed molecules into an abstract representation where specific functional groups (e.g., phenyl rings) are designated as conceptual pharmacophore elements (e.g., 'aromatic ring') [1].

Validation: Test the pharmacophore model hypothesis by assessing its ability to account for differences in biological activity across a range of molecules. As new biological data becomes available, the model can be updated and refined [1].

Performance Comparison: Pharmacophore-Based vs. Docking-Based Virtual Screening

Virtual screening has become an indispensable tool in modern drug discovery pipelines. The two primary computational approaches for virtual screening are pharmacophore-based virtual screening (PBVS) and docking-based virtual screening (DBVS). A comprehensive benchmark study compared these methods across eight structurally diverse protein targets, providing valuable performance data for researchers selecting screening methodologies [7].

Table 1: Performance comparison between pharmacophore-based virtual screening (PBVS) and docking-based virtual screening (DBVS) across eight protein targets

| Target | Method | Enrichment Factor | Hit Rate at 2% | Hit Rate at 5% |
|---|---|---|---|---|
| ACE | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| AChE | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| AR | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| DacA | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| DHFR | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| ERα | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| HIV-pr | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| TK | PBVS | Higher in 14/16 cases | Much higher | Much higher |
| Average | PBVS | Superior | Much higher | Much higher |
| Average | DBVS | Lower | Lower | Lower |

The study found that PBVS outperformed DBVS for most targets and metrics. Of the sixteen sets of virtual screens (eight targets, each screened against two test databases), PBVS gave higher enrichment factors than DBVS in fourteen [7]. The average hit rates over the eight targets at the top 2% and 5% of the ranked databases were also substantially higher for PBVS than for DBVS [7]. These results suggest that PBVS is a powerful method for retrieving active compounds from chemical databases in drug discovery campaigns.
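For readers who want to reproduce this kind of comparison on their own data, the two metrics can be computed from a ranked screening list. The sketch below is not the benchmark study's code. It assumes that the hit rate at x% is the fraction of actives among the top x% of ranked compounds, and that the enrichment factor is that hit rate divided by the fraction of actives in the whole database. The function name and the toy data are hypothetical.

```python
def top_fraction_metrics(scores, labels, fraction):
    """Hit rate and enrichment factor in the top `fraction` of a ranked list.

    scores: higher means better ranked; labels: 1 = active, 0 = decoy.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    actives_total = sum(labels)
    if actives_total == 0:
        raise ValueError("at least one active is required")

    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_total = len(ranked)
    n_selected = max(1, round(n_total * fraction))
    hits_selected = sum(label for _, label in ranked[:n_selected])

    hit_rate = hits_selected / n_selected
    enrichment = hit_rate / (actives_total / n_total)
    return hit_rate, enrichment


# Toy database: 100 compounds, 10 actives, 3 of them ranked in the top 5.
scores = list(range(100, 0, -1))
labels = [0] * 100
for index in (0, 1, 3, 20, 30, 40, 50, 60, 70, 80):
    labels[index] = 1

hit_rate, enrichment = top_fraction_metrics(scores, labels, 0.05)
print(f"hit rate = {hit_rate:.2f}, enrichment factor = {enrichment:.1f}")
```

Definitions of hit rate vary between publications, so the definition used by a given study should be checked before comparing numbers.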

Case Study: CDK-2 Inhibitors Screening

A separate study focusing on CDK-2 inhibitors provides additional performance comparisons, specifically evaluating molecular dynamics-derived pharmacophore models against docking approaches [8].

Table 2: Performance comparison of different virtual screening methods for CDK-2 inhibitors

| Method | Approach | ROC₅% Value | Performance Notes |
|---|---|---|---|
| MYSHAPE | MD-pharmacophore | 0.99 | Best performance when multiple target-ligand complexes are available |
| CHA | MD-pharmacophore | 0.98-0.99 | Improved performance with MD trajectories |
| Docking | DBVS | 0.89-0.94 | Standard docking performance |
| Glide | DBVS | 0.89-0.94 | Semi-flexible constrained/unconstrained docking |

The results demonstrated that the use of molecular dynamics (MD) trajectories significantly improved screening performance. The MYSHAPE approach achieved exceptional performance (ROC₅% = 0.99) when multiple target-ligand complexes were available, while the Common Hit Approach (CHA) also showed sharp improvement over single-complex methods [8]. Both MD-derived pharmacophore methods outperformed traditional docking approaches (ROC₅% = 0.89-0.94), indicating their superior suitability for prospective screening and identification of novel CDK-2 inhibitors [8].

Experimental Protocols and Methodologies

Benchmark Comparison Protocol

The comprehensive benchmark study comparing PBVS and DBVS followed a rigorous experimental protocol [7]:

Target Selection: Eight pharmaceutically relevant targets representing diverse pharmacological functions and disease areas were selected: angiotensin-converting enzyme (ACE), acetylcholinesterase (AChE), androgen receptor (AR), D-alanyl-D-alanine carboxypeptidase (DacA), dihydrofolate reductase (DHFR), estrogen receptor α (ERα), HIV-1 protease (HIV-pr), and thymidine kinase (TK).

Data Set Preparation: For each target, an active dataset containing experimentally validated active compounds was constructed. Two decoy datasets (Decoy I and Decoy II) composed of approximately 1000 compounds each were generated.

Pharmacophore Model Construction: Each pharmacophore model was constructed based on several X-ray crystal structures of the target protein in complex with ligands using LigandScout software.

Virtual Screening Execution: Each molecular database was searched using both pharmacophore-based (Catalyst software) and docking-based (DOCK, GOLD, and Glide programs) virtual screening approaches against the corresponding model.

Performance Evaluation: Virtual screening effectiveness was evaluated by measuring enrichment factors and hit rates at different percentage thresholds of the ranked databases.

Molecular Dynamics-Derived Pharmacophore Protocol

The protocol for developing molecular dynamics-derived pharmacophore models for CDK-2 inhibitors involved [8]:

Structure Preparation: Selection of 149 CDK-2/inhibitor complexes from the Protein Data Bank, followed by protein preparation and optimization.

Molecular Dynamics Simulations: Running MD simulations for each complex using appropriate force field parameters and simulation conditions.

Trajectory Conversion: Processing MD trajectory output files using VMD software, desolvating complexes, and eliminating ions to focus on ligand-protein interactions.

Pharmacophore Generation: Converting MD complexes to pharmacophore models using LigandScout 4.2.1, generating feature vectors for each model.

Model Aggregation: Applying CHA and MYSHAPE approaches to aggregate distinct pharmacophore feature vectors and identify the most relevant interaction patterns.

Virtual Screening Performance Assessment: Evaluating models using receiver operating characteristic (ROC) curve analysis at early enrichment stages (ROC₅%).
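The ROC-based assessment in the last step can be sketched in a few lines. The source does not spell out how ROC₅% is calculated, so this example makes an assumption: it reports the full ROC AUC together with an early-recognition value, the fraction of actives recovered before 5% of the decoys have been retrieved. Tied scores are not treated specially, and all names and data are hypothetical.

```python
def roc_points(scores, labels):
    """Return ROC curve points (FPR, TPR) for a ranked list.

    scores: higher means better ranked; labels: 1 = active, 0 = decoy.
    Ties are processed in sort order (no special handling).
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one active and one decoy")

    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    true_pos = false_pos = 0
    points = [(0.0, 0.0)]
    for _, label in ranked:
        if label:
            true_pos += 1
        else:
            false_pos += 1
        points.append((false_pos / n_neg, true_pos / n_pos))
    return points


def auc(points):
    """Area under the curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area


def tpr_at_fpr(points, max_fpr):
    """Highest true positive rate reached at or below the given FPR."""
    return max(tpr for fpr, tpr in points if fpr <= max_fpr)


# Toy ranking: three actives and three decoys.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
labels = [1, 1, 0, 1, 0, 0]

points = roc_points(scores, labels)
print(f"AUC = {auc(points):.3f}")
print(f"TPR at 5% FPR = {tpr_at_fpr(points, 0.05):.3f}")
```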

Emerging Trends and Advanced Applications

Pharmacophore-Informed Generative Models

Recent advances have integrated pharmacophore concepts with deep generative models for de novo molecular design. TransPharmer represents one such approach that combines ligand-based interpretable pharmacophore fingerprints with a generative pre-training transformer (GPT)-based framework [5]. This integration enables the generation of structurally novel compounds that maintain essential pharmacophoric constraints, demonstrating significant potential for scaffold hopping in drug discovery.

In validation studies, TransPharmer demonstrated exceptional performance in generating bioactive ligands. In a case study targeting polo-like kinase 1 (PLK1), three out of four synthesized compounds showed submicromolar activities, with the most potent compound (IIP0943) exhibiting a potency of 5.1 nM [5]. Notably, IIP0943 featured a new 4-(benzo[b]thiophen-7-yloxy)pyrimidine scaffold distinct from known PLK1 inhibitors, demonstrating the scaffold-hopping capability of pharmacophore-informed generative models [5].

Another approach, Pharmacophore-Guided deep learning approach for bioactive Molecule Generation (PGMG), uses pharmacophore hypotheses as a bridge to connect different types of activity data [9]. PGMG employs a complete graph to represent pharmacophores, with each node corresponding to a pharmacophore feature, enabling the spatial information to be encoded as distances between node pairs [9]. This method has demonstrated flexibility in utilizing different activity data types in a uniform representation to control the molecule design process.
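The complete-graph encoding described above can be illustrated with a small data-structure sketch. This is not PGMG's implementation. It assumes Euclidean distances between feature centers as the edge attribute, which the description above does not specify, and the function name and coordinates are hypothetical.

```python
from itertools import combinations
from math import dist


def pharmacophore_graph(features):
    """Build a complete graph from (kind, (x, y, z)) feature tuples.

    Returns a list of node labels and a dict that maps each node pair
    (i, j), with i < j, to the distance between the two feature centers.
    """
    nodes = [kind for kind, _ in features]
    edges = {
        (i, j): dist(features[i][1], features[j][1])
        for i, j in combinations(range(len(features)), 2)
    }
    return nodes, edges


# Hypothetical three-feature pharmacophore (coordinates in angstroms).
features = [
    ("HBA", (0.0, 0.0, 0.0)),
    ("HBD", (3.0, 0.0, 0.0)),
    ("AR", (0.0, 4.0, 0.0)),
]

nodes, edges = pharmacophore_graph(features)
for (i, j), distance in edges.items():
    print(f"{nodes[i]}-{nodes[j]}: {distance:.1f}")
```

Because only pairwise distances are stored, the representation is unchanged by rotation and translation of the pharmacophore.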

Diffusion Models for Pharmacophore Generation

PharmacoForge represents a cutting-edge approach that employs diffusion models for generating 3D pharmacophores conditioned on a protein pocket [10]. This method addresses limitations in both virtual screening and de novo design by leveraging generative modeling to design pharmacophores for given protein pockets. The generated pharmacophore queries identify ligands that are guaranteed to be valid, commercially available molecules, overcoming the synthetic accessibility challenges often faced by de novo generation methods [10].

In evaluation studies, PharmacoForge surpassed other pharmacophore generation methods in the LIT-PCBA benchmark, and resulting ligands from pharmacophore queries performed similarly to de novo generated ligands when docking to DUD-E targets while having lower strain energies [10]. This approach demonstrates the potential of modern generative artificial intelligence techniques to enhance traditional pharmacophore methods.

Essential Research Reagents and Tools

Table 3: Key software tools and computational resources for pharmacophore modeling and virtual screening

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| LigandScout | Software | Structure-based & ligand-based pharmacophore modeling | Feature identification from protein-ligand complexes [7] [8] |
| Catalyst/HipHop | Software | Pharmacophore-based virtual screening | Database screening and molecule selection [7] |
| Pharmit | Software | Pharmacophore search and virtual screening | Rapid screening of molecular databases [10] |
| RDKit | Cheminformatics | Chemical feature identification and pharmacophore fingerprint calculation | Open-source cheminformatics toolkit [5] [9] |
| DOCK, GOLD, Glide | Docking Software | Docking-based virtual screening | Comparative performance studies [7] |
| TransPharmer | Generative Model | Pharmacophore-informed molecule generation | De novo molecular design with pharmacophoric constraints [5] |
| PGMG | Generative Model | Pharmacophore-guided deep learning for molecule generation | Bioactive molecule generation from pharmacophore hypotheses [9] |
| PharmacoForge | Generative Model | Diffusion-based pharmacophore generation | 3D pharmacophore generation conditioned on protein pockets [10] |

The evolution of the pharmacophore concept from its historical origins to the precise IUPAC definition reflects its fundamental importance in drug discovery. The benchmark comparisons summarized above indicate that pharmacophore-based virtual screening often outperforms docking-based approaches in enrichment factors and hit rates across diverse protein targets. Integrating molecular dynamics simulations can further improve pharmacophore model quality and screening performance.

Emerging trends in pharmacophore-informed generative models and diffusion-based approaches represent the next frontier in computational drug discovery, combining the interpretability and scaffold-hopping capability of traditional pharmacophore methods with the novelty and creativity of modern artificial intelligence techniques. As these methodologies continue to evolve, pharmacophore-based approaches will remain essential tools for researchers and drug development professionals seeking to efficiently navigate complex chemical spaces and identify novel bioactive compounds.

In rational drug discovery, a pharmacophore is defined as the ensemble of steric and electronic features that are necessary to ensure optimal supramolecular interactions with a specific biological target and to trigger (or block) its biological response [11] [12]. This abstract representation captures the essential, three-dimensional arrangement of molecular interaction capacities shared by active ligands, focusing on key features rather than specific chemical scaffolds [11]. The core features consistently identified as critical for molecular recognition include hydrogen bond donors and acceptors, hydrophobic regions, and positive and negative ionizable groups [13] [12]. These features facilitate fundamental interactions such as electrostatic attractions, hydrogen bonding, van der Waals forces, and hydrophobic contacts that drive binding affinity and specificity [12]. This guide provides a comparative analysis of these essential pharmacophoric features, detailing their performance characteristics, experimental validation methodologies, and applications in modern drug discovery pipelines.

Table 1: Core Pharmacophoric Features and Their Characteristics

| Feature Type | Atoms/Groups Involved | Primary Interaction Type | Spatial Representation | Tolerance Parameters |
|---|---|---|---|---|
| Hydrogen Bond Acceptor | Oxygen, Nitrogen (with lone pairs) in carbonyls, ethers | Electrostatic, Hydrogen bonding | Vector (cone for sp²) | Distance: ~2.5–3.0 Å; Angle: ~50° (sp²) [14] |
| Hydrogen Bond Donor | N-H, O-H groups | Electrostatic, Hydrogen bonding | Vector (torus for sp³) | Distance: ~2.5–3.0 Å; Angle: ~34° (sp³) [14] |
| Hydrophobic Area | Alkyl chains, aromatic rings, aliphatic carbons | van der Waals, Lipophilic | Spherical centroid/Volume | Sphere radius: ~4–6 Å [12] |
| Positive Ionizable | Protonated amines (pKa 7–10) | Ionic, Salt bridge | Point charge | pKa-based tolerance at pH 7.4 [12] |
| Negative Ionizable | Carboxylates, phosphates (pKa 3–5) | Ionic, Salt bridge | Point charge | pKa-based tolerance at pH 7.4 [12] |
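The pKa-based criterion for ionizable features can be made concrete with the Henderson-Hasselbalch relation, which gives the fraction of a group that carries a charge at a given pH. The sketch below is illustrative only. The function name and the example pKa values are assumptions, and modeling packages apply their own rules for assigning ionizable features.

```python
def fraction_ionized(pka, ph=7.4, kind="base"):
    """Charged fraction of an ionizable group from Henderson-Hasselbalch.

    kind="base": fraction protonated (cationic), e.g. an amine.
    kind="acid": fraction deprotonated (anionic), e.g. a carboxylic acid.
    """
    if kind == "base":
        return 1.0 / (1.0 + 10 ** (ph - pka))
    if kind == "acid":
        return 1.0 / (1.0 + 10 ** (pka - ph))
    raise ValueError("kind must be 'base' or 'acid'")


# Hypothetical groups evaluated at physiological pH 7.4.
amine = fraction_ionized(9.4, kind="base")         # amine with pKa 9.4
carboxylate = fraction_ionized(4.4, kind="acid")   # acid with pKa 4.4

print(f"amine protonated fraction: {amine:.3f}")
print(f"acid deprotonated fraction: {carboxylate:.3f}")
```

A group whose pKa equals the pH is exactly half ionized, which is why amines with pKa values well above 7.4 and acids with pKa values well below it are treated as predominantly charged.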

Methodologies for Pharmacophore Model Development

Comparative Workflows: Ligand-Based vs. Structure-Based Approaches

The development of robust pharmacophore models primarily follows two distinct computational workflows, each with specific protocols and applications. The choice between these approaches depends largely on the availability of structural information for the biological target.

Ligand-based pharmacophore modeling relies exclusively on a set of known active compounds to derive common chemical features and their spatial arrangement when no target structure is available [13] [14]. The protocol begins with conformational analysis of active ligands to generate multiple 3D conformers and identify bioactive conformations using techniques like systematic search, Monte Carlo sampling, or molecular dynamics simulations [13]. Subsequent molecular alignment superimposes these conformers to identify shared pharmacophoric features through common feature alignment or flexible alignment algorithms [13]. Finally, feature identification algorithms detect key pharmacophoric features, with statistical methods like principal component analysis used to select the most discriminating features for model building [13].

Structure-based pharmacophore modeling utilizes the 3D structure of the target protein, typically obtained from X-ray crystallography, NMR, or homology modeling [13] [14]. This approach involves analyzing the binding site to identify key interaction points and generate complementary pharmacophoric features [13]. The process typically employs molecular docking of known actives or fragment-like molecules into the binding pocket, followed by analysis of protein-ligand interactions to define critical pharmacophore features [15] [14]. Advanced implementations may incorporate molecular dynamics simulations to account for protein flexibility and induced-fit effects, leading to more dynamic and robust pharmacophore models [14].

Research Reagent Solutions for Pharmacophore Modeling

Table 2: Essential Research Tools for Pharmacophore Modeling

| Tool Category | Specific Software/Resource | Primary Function | Application Context |
|---|---|---|---|
| Commercial Modeling Suites | Discovery Studio [11] [15], MOE [11], LigandScout [11] | Comprehensive pharmacophore modeling, virtual screening | Structure-based & ligand-based design |
| Open-Source Tools | Pharmit [15] [10], Pharmer [10] [16] | Pharmacophore-based virtual screening | High-throughput compound screening |
| Generative AI Models | TransPharmer [5], PharmacoForge [10] [16], PGMG [5] [17] | De novo molecular generation using pharmacophore constraints | Scaffold hopping, novel ligand design |
| Structural Databases | Protein Data Bank (PDB) [11], ZINC [5] [18], BindingDB [18] [15] | Source of protein structures and compound libraries | Template identification, virtual screening |
| Simulation & Analysis | GROMACS [14], AMBER [14], GOLD [15] | Molecular dynamics, docking, conformational analysis | Bioactive pose prediction, model validation |

Performance Comparison of Pharmacophore Modeling Approaches

Quantitative Assessment of Modeling Techniques

Recent advances in computational methodologies have enabled rigorous performance benchmarking of different pharmacophore modeling approaches. The integration of artificial intelligence and machine learning has particularly transformed the efficiency and predictive power of pharmacophore-based screening.

Table 3: Performance Metrics of Pharmacophore Modeling Approaches

| Modeling Approach | Enrichment Factor | Scaffold Hopping Efficiency | Computational Speed | Key Limitations |
|---|---|---|---|---|
| Traditional Ligand-Based | 15-30× [15] | Moderate | Fast to Moderate | Limited to known chemotypes, requires multiple active ligands |
| Traditional Structure-Based | 20-40× [15] | High | Moderate | Dependent on quality of protein structure, less accurate with homology models |
| AI-Enhanced Generative (TransPharmer) | N/A | High (Structurally novel compounds with 5.1 nM potency) [5] | Fast generation, slower training | Requires extensive training data, complex implementation |
| Ensemble Pharmacophore (dyphAI) | Identified 18 novel AChE inhibitors with binding energies -62 to -115 kJ/mol [18] | High (Novel chemotypes with IC₅₀ ≤ control) [18] | Resource-intensive | Computationally demanding for large datasets |
| Diffusion Models (PharmacoForge) | Surpasses other methods on LIT-PCBA benchmark [10] [16] | High (Valid, commercially available molecules) [10] | Fast screening, moderate generation | Limited by training data diversity |

Experimental Validation Protocols

Validation is a critical step in pharmacophore model development to assess quality, robustness, and predictive power [13]. Internal validation evaluates the model's ability to correctly classify training set compounds using techniques like leave-one-out cross-validation and bootstrapping, with statistical metrics including enrichment factor, ROC curves, and AUC values [13]. External validation assesses predictive power using an independent test set of compounds not used in model development, containing both active and inactive compounds to evaluate true positive and true negative identification rates [13].
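The true positive and true negative rates used in external validation follow directly from a confusion matrix. The sketch below shows one way to compute them, assuming the model's output has been reduced to a binary "matches the pharmacophore" flag for each test compound. The function name and the toy test set are hypothetical.

```python
def confusion_metrics(predicted, actual):
    """Sensitivity, specificity and accuracy for binary predictions.

    predicted: 1 if the model flags the compound as a hit, else 0.
    actual:    1 if the compound is experimentally active, else 0.
    """
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)
    fp = sum(1 for p, a in pairs if p and not a)
    fn = sum(1 for p, a in pairs if not p and a)
    tn = sum(1 for p, a in pairs if not p and not a)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("test set needs both actives and inactives")

    return {
        "sensitivity": tp / (tp + fn),   # true positive rate
        "specificity": tn / (tn + fp),   # true negative rate
        "accuracy": (tp + tn) / len(pairs),
    }


# Toy external test set of eight compounds.
predicted = [1, 1, 1, 0, 0, 0, 0, 1]
actual = [1, 1, 0, 1, 0, 0, 0, 0]

for name, value in confusion_metrics(predicted, actual).items():
    print(f"{name}: {value:.3f}")
```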

For experimental confirmation, top-ranking virtual hits identified through pharmacophore screening are subjected to in vitro bioactivity testing. For example, in the dyphAI study targeting acetylcholinesterase inhibitors, nine computationally identified molecules were acquired and tested for inhibitory activity against human AChE, with results showing IC₅₀ values lower than or equal to the control (galantamine) for several compounds [18]. Similarly, TransPharmer-generated PLK1 inhibitors were synthesized and tested, demonstrating submicromolar to nanomolar activities (5.1 nM for the most potent compound IIP0943) [5].

Advanced Applications and Case Studies

Integrative Workflows in Drug Discovery

Modern pharmacophore applications increasingly combine multiple computational techniques into integrated workflows that enhance screening efficiency and success rates. The following diagram illustrates a comprehensive structure-based pharmacophore workflow for target identification and inhibitor development.

Case studies demonstrate the successful application of these integrated workflows. In Alzheimer's disease research, the dyphAI protocol identified 18 novel AChE inhibitors from the ZINC database, with experimental testing confirming that multiple compounds exhibited IC₅₀ values lower than or equal to the control drug galantamine [18]. In diabetes research, pharmacophore modeling targeting α-glucosidase achieved an enrichment factor of 50.6 during virtual screening, leading to the design of a novel glycosyl-based scaffold with superior binding compared to acarbose [15]. In oncology, the TransPharmer generative model produced novel PLK1 inhibitors featuring a new 4-(benzo[b]thiophen-7-yloxy)pyrimidine scaffold, with the most potent compound (IIP0943) demonstrating 5.1 nM potency, high selectivity, and submicromolar activity in cell proliferation assays [5].

Emerging AI and Machine Learning Approaches

Artificial intelligence has revolutionized pharmacophore modeling through several innovative architectures. TransPharmer integrates ligand-based interpretable pharmacophore fingerprints with a generative pre-training transformer framework for de novo molecule generation, excelling in scaffold elaboration under pharmacophoric constraints and demonstrating unique capabilities for scaffold hopping [5]. PharmacoForge implements a diffusion model for generating 3D pharmacophores conditioned on protein pockets, producing queries that identify valid, commercially available molecules while achieving superior performance on the LIT-PCBA benchmark compared to other automated methods [10] [16]. Reinforcement learning approaches like PharmRL optimize pharmacophore feature selection through deep-Q learning algorithms, though they face challenges with generalization and require target-specific training [10] [16].

These AI-enhanced methods address fundamental limitations of traditional pharmacophore modeling, particularly in handling conformational flexibility, protein dynamics, and achieving optimal balance between model specificity and sensitivity [13] [5]. By leveraging large-scale chemical and biological data, they enable more efficient exploration of chemical space while maintaining pharmacophoric patterns essential for biological activity.

Comparing Ligand-Based vs. Structure-Based Pharmacophore Modeling Approaches

Pharmacophore modeling holds an irreplaceable position in modern drug discovery, serving as a cornerstone for virtual screening and lead compound optimization [19] [20]. A pharmacophore model represents an abstraction of essential chemical interaction patterns—a set of chemical features with specific three-dimensional arrangements responsible for biological activity against a particular molecular target [19]. These features typically include hydrogen bond acceptors (HBA), hydrogen bond donors (HBD), hydrophobic (HY) regions, positively or negatively charged groups, aromatic rings (Ar), and exclusion volumes representing steric constraints [19] [20].

The spatial and physicochemical restrictions imposed by binding sites dictate ligand binding modes, allowing structurally diverse molecules to interact with the same bioreceptor through shared pharmacophore patterns [19]. Two distinct computational approaches have emerged for developing these models: ligand-based and structure-based pharmacophore modeling [19] [21]. The fundamental distinction lies in their source information—ligand-based methods rely on the structural characteristics of known active compounds, while structure-based approaches derive features directly from the three-dimensional structure of the target protein, often complexed with a ligand [19].

This guide provides a comprehensive comparison of these complementary methodologies, examining their underlying principles, performance characteristics, experimental workflows, and applications in contemporary drug discovery, with a special focus on their integration in the artificial intelligence era [22].

Core Principles and Theoretical Foundations

Ligand-Based Pharmacophore Modeling

Ligand-based pharmacophore modeling operates on the principle that compounds sharing similar biological activities against a common molecular target likely possess conserved chemical features essential for molecular recognition [19] [21]. This approach extracts the three-dimensional chemical patterns common to a set of active compounds without requiring structural information about the target protein itself [19].

The methodology employs 3D structural alignment of active compounds to identify shared functional groups and their spatial arrangements [19]. Through this process, the algorithm discriminates between features crucial for biological activity and those incidental to it. The resulting model represents the essential chemical framework responsible for the observed pharmacological effect [21].

A significant strength of this approach is its applicability to targets with unknown or difficult-to-resolve three-dimensional structures [21]. However, its effectiveness depends heavily on the quality, diversity, and structural coverage of the known active compounds used for model generation [19].

Structure-Based Pharmacophore Modeling

Structure-based pharmacophore modeling directly translates structural information from protein-ligand complexes into pharmacophore features [19]. This method analyzes intermolecular interactions—such as hydrogen bonds, hydrophobic contacts, ionic interactions, and metal coordinations—between a ligand and its target binding site [23] [24].

The approach requires experimentally elucidated structures from techniques like X-ray crystallography, nuclear magnetic resonance (NMR) spectroscopy, or cryo-electron microscopy (cryo-EM) [21]. Recent advances also permit using computationally predicted structures from tools like AlphaFold2, though with potential limitations in precision for binding site characterization [22].

Structure-based models explicitly capture complementarity principles between ligand and receptor, often including exclusion volumes representing regions occupied by protein atoms where ligand atoms cannot penetrate [19] [20]. This method can generate effective models even from a single protein-ligand complex, making it particularly valuable for novel targets with limited known active compounds [23].
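To make the idea of exclusion volumes concrete, the following minimal sketch treats each exclusion volume as a sphere and rejects a ligand pose if any atom falls inside one. The function name, the sphere representation, and all coordinates are illustrative assumptions, not the data model of any particular pharmacophore package.

```python
from math import dist

def violates_exclusion(ligand_atoms, exclusion_spheres, tolerance=0.0):
    """Return True if any ligand atom lies inside any exclusion sphere.

    ligand_atoms: iterable of (x, y, z) coordinates in angstroms.
    exclusion_spheres: iterable of ((x, y, z), radius) tuples.
    tolerance: slack in angstroms subtracted from each radius (assumption).
    """
    for atom in ligand_atoms:
        for center, radius in exclusion_spheres:
            if dist(atom, center) < radius - tolerance:
                return True
    return False

# Hypothetical coordinates, for illustration only.
spheres = [((0.0, 0.0, 0.0), 1.5)]
pose_ok = [(3.0, 0.0, 0.0), (4.2, 0.5, 0.0)]
pose_clash = [(1.0, 0.0, 0.0), (4.2, 0.5, 0.0)]

print(violates_exclusion(pose_ok, spheres))
print(violates_exclusion(pose_clash, spheres))
```

Real tools typically derive the sphere positions and radii from the protein atoms lining the binding site; the check itself stays this simple.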

Comparative Analysis: Key Differences and Performance Metrics

Fundamental Distinctions

Table 1: Core methodological differences between ligand-based and structure-based pharmacophore modeling

| Aspect | Ligand-Based Approach | Structure-Based Approach |
|---|---|---|
| Data Source | 3D structures of known active ligands [19] | 3D structure of target protein (often complexed with ligand) [19] |
| Target Structure Requirement | Not required [21] | Essential (from X-ray, NMR, Cryo-EM, or prediction) [21] |
| Information Captured | Common chemical features of active ligands [19] | Complementary interaction features from binding site [19] |
| Exclusion Volumes | Not typically included | Can be incorporated to represent protein steric constraints [20] |
| Suitable Scenarios | Targets with unknown structure; numerous known actives [21] | Targets with known structure; limited known active compounds [19] |
| Chemical Novelty | May limit structural diversity due to similarity constraints [19] | Can identify structurally novel scaffolds through interaction matching [20] |

Performance Characteristics and Validation Metrics

Table 2: Performance assessment and validation metrics for pharmacophore models

| Performance Aspect | Ligand-Based Approach | Structure-Based Approach |
|---|---|---|
| Validation Method | Screening against known active/inactive compounds [19] | Screening against known active/inactive compounds [23] |
| Key Metrics | Sensitivity (Recall), Specificity, Yield of Actives (Precision), Enrichment Factor, Goodness of Hit (GH) [23] | Sensitivity (Recall), Specificity, Yield of Actives (Precision), Enrichment Factor, Goodness of Hit (GH) [23] |
| Sensitivity | Ability to identify true positives from active compound set [23] | Ability to identify true positives from active compound set [23] |
| Specificity | Ability to reject false positives (decoys) [23] | Ability to reject false positives (decoys) [23] |
| Enrichment Factor (EF) | Measure of how much better than random the model performs [23] | Measure of how much better than random the model performs [23] |
| Model Flexibility | Can be tuned for more restrictive (higher specificity) or permissive (higher sensitivity) screening [19] | Features directly constrained by binding site geometry [19] |
| Scoring Functions | RMSD-based or overlay-based scoring for fitness assessment [19] | RMSD-based or overlay-based scoring for fitness assessment [19] |

In virtual screening applications, the choice between restrictive versus permissive pharmacophore models involves important trade-offs. Highly restrictive models tend to select compounds with better predicted activities but may reduce structural diversity, while less restrictive models can retrieve more hits but with an increased risk of false positives [19].
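The validation metrics in Table 2 reduce to simple ratios over a confusion matrix. The sketch below shows how sensitivity, specificity, and yield of actives follow from the counts of a screening run; the function name and the example counts are hypothetical and are not taken from any cited study.

```python
def screening_metrics(tp, fp, tn, fn):
    """Basic validation metrics for a pharmacophore screen.

    tp: actives retrieved as hits      fn: actives missed
    fp: decoys retrieved as hits       tn: decoys correctly rejected
    """
    return {
        "sensitivity": tp / (tp + fn),       # fraction of actives retrieved
        "specificity": tn / (tn + fp),       # fraction of decoys rejected
        "yield_of_actives": tp / (tp + fp),  # fraction of hits that are active
    }

# Hypothetical screen: 100 actives and 900 decoys, 125 compounds retrieved.
metrics = screening_metrics(tp=80, fp=45, tn=855, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Tightening the model (for example, adding a feature or shrinking tolerances) usually moves compounds from the `tp` and `fp` cells into `fn` and `tn`, which is the restrictive versus permissive trade-off described above.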

Experimental Protocols and Workflows

Ligand-Based Pharmacophore Modeling Workflow

Figure 1: Ligand-based pharmacophore modeling and virtual screening workflow [19]

The ligand-based protocol begins with curating a set of experimentally validated active compounds with diverse chemical structures [19] [25]. For example, a study seeking alternatives to fluoroquinolone antibiotics used four of them (Ciprofloxacin, Delafloxacin, Levofloxacin, and Ofloxacin) to develop a shared feature pharmacophore map [25].

The subsequent steps involve:

- 3D Conformation Generation: Generating representative 3D conformations for each compound, accounting for molecular flexibility [19].

- Structural Alignment: Aligning the 3D structures to identify spatially conserved features [19]. Programs like Molecular Operating Environment (MOE) or open-source tools like Pharmer and Align-it implement various algorithms for this purpose [19].

- Feature Identification: Determining conserved chemical features (hydrogen bond donors/acceptors, hydrophobic areas, aromatic rings, charged groups) critical for activity [19] [25].

- Model Generation and Validation: Creating the pharmacophore hypothesis and validating it using a testing dataset containing both active compounds and decoys [19]. Validation employs statistical metrics including sensitivity, specificity, enrichment factor, and goodness of hit (GH) scores [23].
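Once a hypothesis exists, screening asks whether a candidate conformer presents the same feature types at compatible distances. The following is a minimal brute-force sketch of that test, assuming features are given as (type, coordinates) pairs and that a single distance tolerance applies to every feature pair. It is not the algorithm used by Pharmer, Align-it, or MOE, and because it compares only pairwise distances it cannot distinguish mirror-image arrangements.

```python
from itertools import permutations
from math import dist

def matches_pharmacophore(query, candidate, tol=1.0):
    """Return True if some subset of candidate features reproduces the query.

    query, candidate: lists of (feature_type, (x, y, z)) tuples.
    tol: allowed deviation in angstroms for each pairwise distance (assumption).
    """
    n = len(query)
    for assignment in permutations(candidate, n):
        # Feature types must agree position by position.
        if any(q[0] != c[0] for q, c in zip(query, assignment)):
            continue
        # All pairwise distances must agree within the tolerance.
        if all(
            abs(dist(query[i][1], query[j][1])
                - dist(assignment[i][1], assignment[j][1])) <= tol
            for i in range(n) for j in range(i + 1, n)
        ):
            return True
    return False

# Hypothetical three-point hypothesis and two candidate conformers.
query = [("HBA", (0, 0, 0)), ("HBD", (3, 0, 0)), ("HYD", (0, 4, 0))]
hit = [("AR", (12, 12, 10)), ("HYD", (10, 14, 10)),
       ("HBA", (10, 10, 10)), ("HBD", (13, 10, 10))]
miss = [("HBA", (0, 0, 0)), ("HBD", (3, 0, 0)), ("HYD", (0, 8, 0))]

print(matches_pharmacophore(query, hit))
print(matches_pharmacophore(query, miss))
```

The permutation search is exponential in the number of features, which is acceptable for the handful of points in a typical hypothesis but is the reason production tools rely on indexing and alignment instead.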

Structure-Based Pharmacophore Modeling Workflow

Figure 2: Structure-based pharmacophore modeling and virtual screening workflow [23] [24]

The structure-based approach employs this detailed methodology:

- Protein Structure Preparation: Obtaining and preparing a high-quality protein-ligand complex structure. For example, in identifying novel FAK1 inhibitors, researchers used the FAK1–P4N complex (PDB ID: 6YOJ) with missing residues modeled using MODELLER software [23].

- Interaction Analysis: Analyzing specific interactions between the ligand and binding site residues. Tools like Pharmit or LigandScout automatically detect hydrogen bonds, hydrophobic interactions, ionic interactions, and metal coordinations [19] [23].

- Feature Selection and Model Generation: Translating key interactions into pharmacophore features and incorporating exclusion volumes to represent steric constraints [20] [23].

- Model Validation: Rigorously validating the model before virtual screening. For FAK1 inhibitors, researchers used 114 active compounds and 571 decoys from the DUD-E database, calculating sensitivity, specificity, enrichment factor, and goodness of hit scores to select the optimal model [23].

Combined and AI-Enhanced Approaches

Contemporary research increasingly leverages hybrid strategies that integrate both ligand-based and structure-based methods, often enhanced with artificial intelligence [22]. These integrated workflows can implement:

- Sequential Combination: Applying ligand-based virtual screening (LBVS) and structure-based virtual screening (SBVS) in consecutive steps to progressively filter compound libraries [22].

- Hybrid Combination: Integrating both approaches into a unified framework that leverages their synergistic effects [22].

- Parallel Combination: Running LBVS and SBVS independently and fusing results using data fusion algorithms [22].
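As an illustration of the parallel strategy, the sketch below fuses two independently ranked hit lists by mean rank. Mean-rank fusion is only one of many possible fusion rules and is chosen here for simplicity; the source does not specify which algorithm is used, and the compound identifiers are hypothetical.

```python
def fuse_by_mean_rank(*rankings):
    """Fuse several ranked lists (best first) into one consensus ranking."""
    compounds = set().union(*rankings)
    mean_rank = {}
    for cid in compounds:
        ranks = []
        for ranking in rankings:
            # A compound absent from a list is placed just past its end.
            rank = ranking.index(cid) + 1 if cid in ranking else len(ranking) + 1
            ranks.append(rank)
        mean_rank[cid] = sum(ranks) / len(ranks)
    # Lower mean rank is better; ties are broken alphabetically.
    return sorted(mean_rank, key=lambda cid: (mean_rank[cid], cid))

# Hypothetical outputs of a ligand-based and a structure-based screen.
lbvs = ["cpd_A", "cpd_B", "cpd_C", "cpd_D"]
sbvs = ["cpd_C", "cpd_A", "cpd_E", "cpd_B"]

consensus = fuse_by_mean_rank(lbvs, sbvs)
print(consensus)
```

Compounds ranked well by both methods rise to the top, while a compound seen by only one method is kept but pushed down.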

AI techniques are revolutionizing both approaches. Deep learning frameworks like DiffPhore demonstrate how knowledge-guided diffusion models can achieve state-of-the-art performance in 3D ligand-pharmacophore mapping, surpassing traditional methods in predicting binding conformations [20]. Similarly, CMD-GEN combines coarse-grained pharmacophore sampling with generative models to optimize molecular stability, drug-likeness, and binding interactions [26].

Case Studies and Experimental Data

Ligand-Based Success: Identifying TGR5 Agonists

A study aimed at discovering novel TGR5 agonists successfully employed ligand-based pharmacophore modeling combined with molecular docking [27]. Researchers generated common feature pharmacophore models using known active compounds and performed virtual screening of large compound libraries. Through this approach, they identified 20 compounds with significant TGR5 agonistic activity at 40 μM concentration. Two compounds—V12 and V14—displayed particularly promising activity with EC₅₀ values of 19.5 μM and 7.7 μM, respectively, representing potential starting points for developing novel TGR5 agonists [27].

Structure-Based Achievement: Discovering Novel FAK1 Inhibitors

In cancer drug discovery, researchers applied structure-based pharmacophore modeling to identify novel FAK1 inhibitors [23]. Using the FAK1-P4N complex (PDB ID: 6YOJ), they developed and validated a pharmacophore model that identified critical interactions in the FAK1 binding pocket. After virtual screening the ZINC database and applying ADMET filtering, they identified four promising candidates. Molecular dynamics simulations and MM/PBSA binding free energy calculations confirmed that compound ZINC23845603 showed strong binding and interaction features similar to the known ligand P4N, making it a promising candidate for further development [23].

Antimicrobial Discovery: Hybrid Approach for Fluoroquinolone Alternatives

A hybrid approach addressed antibiotic resistance by developing a shared feature pharmacophore model from four fluoroquinolone antibiotics [25]. The researchers generated a drug library of 160,000 compounds from ZINCPharmer based on hydrophobic areas, hydrogen bond acceptors, hydrogen bond donors, and aromatic moieties. Virtual screening identified 25 hit compounds with fit scores ranging from 97.85 to 116 and RMSD values from 0.28 to 0.63. Molecular docking against the DNA gyrase subunit A protein (PDB ID: 4DDQ) identified five top compounds with docking scores ranging from -7.3 to -7.4 kcal/mol (compared to -7.3 kcal/mol for ciprofloxacin control). After evaluating drug-likeness using Lipinski's rule, ZINC26740199 emerged as the most promising lead compound [25].

Table 3: Key software tools and resources for pharmacophore modeling

| Tool Name | Approach | Access | Key Features | Application Example |
|---|---|---|---|---|
| LigandScout | Ligand- & Structure-Based | Commercial | 3D pharmacophore modeling, virtual screening | Protein-ligand interaction analysis [19] |
| MOE | Ligand- & Structure-Based | Commercial | Molecular modeling, pharmacophore modeling, QSAR | Comprehensive drug discovery suite [19] |
| Pharmer | Ligand-Based | Open Source | Efficient pharmacophore search algorithms | Virtual screening of large libraries [19] |
| Align-it (Pharao) | Ligand-Based | Open Source | Aligning molecules and pharmacophore elucidation | Molecular similarity assessment [19] |
| Pharmit | Structure-Based | Free Web Server | Interactive pharmacophore modeling and screening | Virtual screening with exclusion volumes [19] [23] |
| PharmMapper | Structure-Based | Free Web Server | Reverse pharmacophore screening | Target identification [19] |
| DiffPhore | AI-Enhanced | Research | Knowledge-guided diffusion for 3D ligand-pharmacophore mapping | Predicting ligand binding conformations [20] |
| CMD-GEN | AI-Enhanced | Research | Coarse-grained pharmacophore sampling & molecular generation | Selective inhibitor design [26] |

Ligand-based and structure-based pharmacophore modeling represent complementary paradigms in computer-aided drug design, each with distinct strengths and optimal application domains. Ligand-based approaches excel when target structural information is unavailable but sufficient active compounds are known, while structure-based methods provide superior insights when protein structures are accessible, enabling identification of novel scaffolds [19] [21].

The evolving landscape of pharmacophore modeling increasingly favors integrated approaches that combine both methodologies, enhanced by artificial intelligence and deep learning techniques [22]. Frameworks like DiffPhore and CMD-GEN demonstrate how knowledge-guided generative models can overcome limitations of traditional methods, achieving superior performance in predicting binding conformations and designing selective inhibitors [20] [26].

As drug discovery faces increasing challenges with difficult targets and demands for rapid lead identification, the strategic combination of ligand-based and structure-based pharmacophore modeling—powered by AI advancements—will continue to provide valuable tools for navigating complex chemical spaces and accelerating therapeutic development [22].

In the field of computer-aided drug design, a pharmacophore is defined by IUPAC as the ensemble of steric and electronic features that is necessary to ensure optimal supramolecular interactions with a specific biological target and to trigger or block its biological response [4] [6]. This abstract representation serves as a powerful tool for identifying the essential molecular interactions responsible for bioactivity, independent of the underlying chemical scaffold. Pharmacophore models distill the complex three-dimensional landscape of ligand-receptor interactions into a set of critical features (such as hydrogen bond donors, hydrogen bond acceptors, hydrophobic regions, and charged groups) that collectively define the requirements for biological activity [4]. By focusing on these key interactions, pharmacophore modeling enables scaffold hopping, where structurally distinct compounds possessing the same pharmacophoric features can be identified or designed, thereby expanding the chemical space for drug discovery [5] [4].

The utility of pharmacophore models extends across the entire drug discovery pipeline, from virtual screening and lead optimization to de novo molecular design [4]. The abstraction they provide allows researchers to bridge the gap between structural information and biological activity, making them indispensable for both ligand-based and structure-based drug design approaches. As computational methods continue to evolve, integrating pharmacophores with advanced techniques like deep learning and molecular dynamics simulations has further enhanced their predictive power and applicability in identifying novel bioactive compounds [5] [9] [8].

Comparative Performance of Pharmacophore Modeling Approaches

Pharmacophore models can be generated through several distinct methodologies, each with its own strengths, limitations, and optimal use cases. The three primary approaches are ligand-based, structure-based, and dynamics-informed pharmacophore modeling.

Ligand-based approaches rely on the structural alignment and common feature extraction from a set of known active compounds. These methods are particularly valuable when the three-dimensional structure of the target protein is unknown [28] [4]. The quality of ligand-based models heavily depends on the diversity and quality of the known actives used for model generation.

Structure-based approaches derive pharmacophore features directly from the analysis of a target protein's binding site, often using crystallographic structures of protein-ligand complexes [29] [4]. These models explicitly incorporate complementary chemical features from the binding site and can include exclusion volumes to represent steric constraints.

Dynamics-informed approaches represent an advanced evolution of structure-based methods that incorporate protein flexibility through molecular dynamics (MD) simulations [29] [8]. By sampling multiple conformational states, these models capture the dynamic nature of ligand-receptor interactions, potentially leading to more robust and biologically relevant pharmacophores.

Quantitative Performance Comparison

The performance of different pharmacophore modeling approaches can be quantitatively evaluated using metrics such as pharmacophoric similarity (Spharma), feature count deviation (Dcount), and virtual screening enrichment. The table below summarizes the comparative performance of various methods and tools based on recent studies:

Table 1: Performance Comparison of Pharmacophore Modeling Approaches and Tools

| Method/Model | Approach Type | Key Performance Metrics | Notable Advantages |
|---|---|---|---|
| TransPharmer [5] | Pharmacophore-informed generative AI | Superior Spharma in de novo generation; Produced a 5.1 nM PLK1 inhibitor (IIP0943) | Excellent scaffold hopping; High structural novelty in generated molecules |
| PGMG [9] | Pharmacophore-guided deep learning | High validity, uniqueness, and novelty scores; Strong docking affinities | Effective for targets with limited activity data; Flexible input requirements |
| MD-Refined Models [29] [8] | Dynamics-informed | ROC5% = 0.99 for CDK-2 screening [8]; Better feature discrimination | Accounts for protein flexibility; Improved distinction between actives/decoys |
| LigandScout-Based Models [8] | Structure-based | ROC5% = 0.89-0.94 for CDK-2 screening | High abstraction of interaction patterns; Suitable for chemically diverse ligands |
| Ligand-Based HipHopRefine [28] | Ligand-based | Enrichment factor of 8.2 for mPGES-1 inhibitors | Excellent discriminatory power; Effective even with congeneric series |

The performance data reveals several key trends. Generative models like TransPharmer and PGMG demonstrate remarkable capability in designing novel bioactive compounds with desired pharmacophoric properties, successfully bridging the gap between virtual screening and de novo design [5] [9]. Dynamics-informed approaches consistently outperform static structure-based methods in virtual screening enrichment, highlighting the importance of accounting for protein flexibility in pharmacophore model generation [29] [8]. Furthermore, specialized techniques like the Common Hit Approach (CHA) and Molecular dYnamics SHAred PharmacophorE (MYSHAPE) show particularly strong performance when multiple target-ligand complexes are available, with MYSHAPE achieving near-perfect enrichment (ROC5% = 0.99) in CDK-2 inhibitor screening [8].

Experimental Protocols for Pharmacophore Model Development and Validation

Structure-Based Pharmacophore Modeling with MD Refinement

Objective: To generate a dynamics-informed pharmacophore model that accounts for protein flexibility and provides enhanced virtual screening performance.

Materials and Software:

- Protein Data Bank (PDB) structure of target protein-ligand complex

- Molecular dynamics simulation software (e.g., GROMACS, AMBER)

- Pharmacophore modeling software (e.g., LigandScout)

- Virtual screening platform (e.g., Schrödinger Suite)

Methodology:

- System Preparation: Obtain the crystal structure of the protein-ligand complex from the PDB. Prepare the protein structure by adding hydrogen atoms, assigning proper bond orders, and correcting missing residues [29] [30].

- Molecular Dynamics Simulation: Perform MD simulations (typically 20 ns) using appropriate force fields and solvation models. Save snapshots at regular intervals throughout the simulation trajectory [29].

- Pharmacophore Generation: Convert MD trajectory snapshots to pharmacophore models using automated software. Generate a feature vector (bit string) for each pharmacophore model [8].

- Model Consensus: Apply the Common Hit Approach (CHA) by aggregating feature vectors and counting occurrence frequencies. For multiple complexes, use the MYSHAPE approach to identify persistent features across different systems [8].

- Validation: Validate the model using receiver operating characteristic (ROC) curve analysis against a database of known actives and decoys. Calculate enrichment factors to quantify screening performance [29] [8].

Key Considerations: This approach is particularly valuable for targets with significant conformational flexibility or when multiple ligand-complex structures are available. The integration of MD simulations helps resolve uncertainties in crystal structures and captures physiological protein dynamics [29].
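The aggregation idea behind the consensus step can be sketched in a few lines: count how often each feature appears across the snapshot-derived models and keep those that persist. This is a simplified reading of the frequency-counting step only, not the published CHA or MYSHAPE implementation; the feature labels and the persistence threshold are hypothetical.

```python
from collections import Counter

def feature_frequencies(snapshots):
    """Fraction of snapshots in which each pharmacophore feature appears."""
    counts = Counter()
    for features in snapshots:
        counts.update(set(features))  # count each feature once per snapshot
    n = len(snapshots)
    return {feature: count / n for feature, count in counts.items()}

def persistent_features(snapshots, min_fraction=0.5):
    """Features present in at least min_fraction of the snapshots."""
    freqs = feature_frequencies(snapshots)
    return sorted(f for f, frac in freqs.items() if frac >= min_fraction)

# Hypothetical feature sets extracted from four MD snapshots.
snapshots = [
    {"HBA_1", "HBD_1", "HYD_1"},
    {"HBA_1", "HYD_1"},
    {"HBA_1", "HBD_1", "HBD_2"},
    {"HBA_1", "HYD_1"},
]

print(feature_frequencies(snapshots))
print(persistent_features(snapshots, min_fraction=0.75))
```

Raising `min_fraction` yields a smaller, more conservative model built from interactions that survive most of the trajectory; lowering it admits transient contacts.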

Ligand-Based Pharmacophore Modeling for Virtual Screening

Objective: To develop a pharmacophore model from a set of known active compounds for virtual screening of novel chemotypes.

Materials and Compounds:

- A curated set of known active compounds (typically 4-10 structures) with biological activity data

- Molecular alignment and pharmacophore generation software (e.g., Catalyst HipHop)

- Database of compounds for virtual screening

Methodology:

- Training Set Selection: Curate a set of active compounds with varying potency levels. Assign priority levels based on activity (e.g., high potency compounds as priority 1) [28].

- Conformational Analysis: Generate representative conformational ensembles for each compound to ensure coverage of bioactive conformations.

- Common Feature Identification: Use algorithms to identify 3D spatial arrangements of chemical features common to active compounds. Features typically include hydrogen bond acceptors/donors, hydrophobic regions, and aromatic rings [28] [4].

- Model Refinement: Refine the model by excluding features not essential for activity. Incorporate shape constraints based on active compound volumes to enhance selectivity [28].

- Theoretical Validation: Screen against a test set containing known actives and inactives. Calculate enrichment factors and ROC curves to validate model discrimination capability [28] [30].

Key Considerations: Ligand-based models require structurally diverse actives for optimal performance. The inclusion of inactive compounds during validation helps verify the model's ability to distinguish true actives [28].
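For the ROC-based part of the validation step, the area under the curve can be computed directly as the probability that a randomly chosen active scores higher than a randomly chosen inactive. The sketch below uses this pairwise (Mann-Whitney) formulation; the scores and labels are hypothetical, and a library routine would normally replace the quadratic loop for large test sets.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve from fit scores and 1/0 activity labels."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for a in actives:
        for d in inactives:
            if a > d:
                wins += 1.0   # active ranked above inactive
            elif a == d:
                wins += 0.5   # ties count as half
    return wins / (len(actives) * len(inactives))

# Hypothetical pharmacophore fit scores for six test compounds.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
labels = [1, 1, 0, 1, 0, 0]

auc = roc_auc(scores, labels)
print(f"AUC = {auc:.3f}")
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation of actives from inactives.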

Pharmacophore-Guided Deep Learning for Molecular Generation

Objective: To generate novel bioactive molecules satisfying specific pharmacophore constraints using deep learning approaches.

Materials and Software:

- Chemical databases for training (e.g., ChEMBL)

- Pharmacophore fingerprinting tools

- Deep learning framework (e.g., PyTorch, TensorFlow)

Methodology:

- Training Data Preparation: Process SMILES representations from chemical databases. Generate pharmacophore fingerprints for each molecule using tools like RDKit [9].

- Model Architecture: Implement a graph neural network to encode spatially distributed pharmacophore features. Use a transformer decoder to generate molecular structures [5] [9].

- Latent Variable Integration: Introduce latent variables to model the many-to-many relationship between pharmacophores and molecules, enhancing output diversity [9].

- Model Training: Train the model to learn the mapping between pharmacophore constraints and molecular structures. Employ techniques like teacher forcing and attention mechanisms [5].

- Generation and Validation: Generate novel molecules conditioned on target pharmacophores. Evaluate generated structures using docking studies, synthetic accessibility metrics, and chemical novelty assessments [5] [9].

Key Considerations: This approach is particularly valuable for exploring novel chemical space and scaffold hopping. The integration of pharmacophore constraints ensures generated molecules maintain essential interaction features while exploring structural diversity [5].

Workflow Visualization of Pharmacophore-Based Drug Discovery

The following diagram illustrates the comprehensive workflow for pharmacophore-based drug discovery, integrating multiple modeling approaches and validation steps:

Diagram 1: Integrated Workflow for Pharmacophore-Based Drug Discovery

Essential Research Reagents and Computational Tools

Successful implementation of pharmacophore-based drug discovery requires specialized computational tools and resources. The following table details essential research reagents and their specific functions in the workflow:

Table 2: Essential Research Reagents and Computational Tools for Pharmacophore Modeling

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| LigandScout [29] [8] | Software | Automated structure-based pharmacophore generation | Interaction pattern analysis from protein-ligand complexes |
| Schrödinger Suite [30] | Software Platform | Protein preparation, molecular docking, pharmacophore modeling | Integrated drug design workflow implementation |
| RDKit [5] [9] | Cheminformatics Library | Pharmacophore fingerprint calculation and molecular processing | Open-source cheminformatics and descriptor generation |
| MD Simulation Software (GROMACS, AMBER) [29] | Computational Tool | Protein-ligand dynamics simulation | Dynamics-informed pharmacophore refinement |
| Protein Data Bank (PDB) [29] [30] | Structural Database | Source of 3D protein-ligand complex structures | Structure-based pharmacophore model development |
| DUD-E Database [29] | Benchmarking Database | Curated sets of actives and decoys for validation | Virtual screening performance assessment |
| ChEMBL [9] | Chemical Database | Bioactivity data and compound structures | Training set selection and model validation |

These tools collectively enable the entire pharmacophore modeling pipeline, from initial data preparation through model generation and validation. The selection of appropriate tools depends on the specific modeling approach, target characteristics, and available computational resources.

Pharmacophore modeling represents a powerful abstraction layer that distills complex molecular recognition processes into fundamental chemical interaction patterns. The comparative analysis presented in this guide demonstrates that while each pharmacophore modeling approach has distinct strengths, the integration of multiple methods—particularly through dynamics-informed refinement and deep learning—provides the most robust framework for identifying novel bioactive compounds. As the field advances, the convergence of pharmacophore modeling with AI-based generative methods and enhanced molecular dynamics simulations promises to further accelerate the discovery of structurally novel therapeutic agents with optimized bioactivity profiles.

Key Performance Metrics and Practical Applications in Drug Discovery

In the field of computer-aided drug design, virtual screening (VS) serves as a crucial technique for rapidly identifying potential hit compounds from extensive chemical libraries. The efficacy of these screening methods requires rigorous assessment using standardized quantitative metrics. Among these, the Enrichment Factor (EF) and Goodness-of-Hit (GH) score stand as two fundamental benchmarks for evaluating virtual screening performance [31]. EF quantifies the ability of a screening method to prioritize active compounds over inactive ones compared to random selection, providing a straightforward measure of early enrichment capability [32]. The GH score offers a more balanced assessment by incorporating both the yield of actives and the false-negative rate, providing a single value that reflects the overall effectiveness of a virtual screening campaign [31]. These metrics are particularly valuable for comparing diverse virtual screening approaches, including structure-based docking, ligand-based pharmacophore screening, and machine learning-based methods, across various protein targets and compound libraries.

Theoretical Foundations of EF and GH Scoring Metrics

Mathematical Definition of Enrichment Factor (EF)

The Enrichment Factor (EF) is calculated as the ratio between the fraction of active compounds identified in a selected top-ranked subset and the fraction of active compounds that would be expected from random selection. The mathematical expression for EF is:

\[ EF = \frac{N_{\text{hit}}^{\text{selected}} / N_{\text{total}}^{\text{selected}}}{N_{\text{hit}}^{\text{total}} / N_{\text{total}}^{\text{total}}} \]

Where:

- \( N_{\text{hit}}^{\text{selected}} \) = number of active compounds in the selected subset

- \( N_{\text{total}}^{\text{selected}} \) = total number of compounds in the selected subset

- \( N_{\text{hit}}^{\text{total}} \) = total number of active compounds in the entire database

- \( N_{\text{total}}^{\text{total}} \) = total number of compounds in the entire database

EF values can be calculated at different fractions of the screened database (e.g., EF1%, EF5%, EF10%), with EF1% being particularly valuable for assessing early enrichment performance [32] [33]. For example, a study on PfDHFR inhibitors reported EF1% values reaching 28-31 for optimal docking and machine learning rescoring combinations, indicating excellent early enrichment capabilities [32].
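The EF definition translates directly into code. The sketch below assumes the library has already been sorted from best to worst score and that activity is encoded as 1 (active) or 0 (decoy); the function name, the rounding convention for the subset size, and the example library are illustrative assumptions.

```python
def enrichment_factor(ranked_labels, fraction):
    """EF at a given fraction of a ranked library (best-scored first).

    ranked_labels: list of 1 (active) / 0 (decoy) in ranked order.
    fraction: top fraction to inspect, e.g. 0.01 for EF1%.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_actives == 0:
        raise ValueError("EF is undefined without any actives")
    # Subset size is rounded to the nearest integer (conventions vary).
    n_selected = max(1, round(fraction * n_total))
    hits_selected = sum(ranked_labels[:n_selected])
    return (hits_selected / n_selected) / (n_actives / n_total)

# Hypothetical library: 1000 compounds, 20 actives, 5 of them in the top 10.
ranked = [1, 0] * 5 + [0] * 975 + [1] * 15

ef1 = enrichment_factor(ranked, 0.01)
print(f"EF1% = {ef1:.1f}")
```

In this toy library the top 1% contains actives at 25 times the background rate, and evaluating the whole list (`fraction=1.0`) returns 1 by construction.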

Mathematical Definition of Goodness-of-Hit (GH) Score

The Goodness-of-Hit (GH) score provides a complementary metric that balances the yield of actives with the false-negative rate, offering a more comprehensive assessment of virtual screening performance. The GH score is defined as:

\[ GH = \left( \frac{H_a (3A + H_t)}{4 H_t A} \right) \times \left( 1 - \frac{H_t - H_a}{N - A} \right) \]

Where:

- \( H_a \) = number of active compounds in the selected subset (hits)

- \( H_t \) = total number of compounds in the selected subset

- \( A \) = total number of active compounds in the entire database

- \( N \) = total number of compounds in the entire database

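The first factor is a weighted average of the yield of actives (\( H_a / H_t \), weight 3/4) and the fraction of all actives recovered (\( H_a / A \), weight 1/4), so it rewards both a pure hit list and good coverage of the known actives. The second factor penalizes false positives, that is, the \( H_t - H_a \) inactive compounds in the hit list, relative to the \( N - A \) inactives in the database. GH scores range from 0 to 1, with higher values indicating better overall performance [31].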
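A direct implementation of the GH formula is shown below as a minimal sketch. The argument names mirror the symbols in the definition, the input checks are an added assumption about what counts as a valid screen, and the example counts are hypothetical.

```python
def gh_score(ha, ht, a, n):
    """Goodness-of-Hit score.

    ha: actives in the hit list      ht: size of the hit list
    a:  actives in the database      n:  size of the database
    """
    if ht <= 0 or a <= 0 or n <= a or ht > n:
        raise ValueError("inconsistent database or hit-list sizes")
    if not 0 <= ha <= min(ht, a) or (ht - ha) > (n - a):
        raise ValueError("inconsistent number of active hits")
    first_factor = ha * (3 * a + ht) / (4 * ht * a)
    second_factor = 1 - (ht - ha) / (n - a)
    return first_factor * second_factor

# Hypothetical screen: 1000 compounds, 20 actives, 10 hits of which 5 are active.
gh = gh_score(ha=5, ht=10, a=20, n=1000)
print(f"GH = {gh:.3f}")

# A hit list that contains exactly the 20 actives scores 1.0.
print(gh_score(ha=20, ht=20, a=20, n=1000))
```

Because the hit list in the first example recovers only a quarter of the actives, its GH score stays well below 1 even though half of the hits are active.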

Comparative Analysis of EF and GH Metrics

Table 1: Comparative characteristics of EF and GH scoring metrics

| Characteristic | Enrichment Factor (EF) | Goodness-of-Hit (GH) Score |
|---|---|---|
| Primary Focus | Early enrichment capability | Balanced performance assessment |
| Calculation | Ratio-based | Multiplicative combination of enrichment and coverage |
| Sensitivity to Database Size | Moderate | Moderate to high |
| False Negative Consideration | No | Yes |
| Typical Application | Initial screening optimization | Comprehensive method validation |
| Value Range | 0 to maximum theoretical enrichment | 0 to 1 |
| Dependence on Active Compound Ratio | High | High |

Experimental Protocols for EF and GH Assessment

Standard Benchmarking Workflow

The evaluation of virtual screening methods using EF and GH scores follows a standardized benchmarking workflow that ensures consistent and comparable results across studies. This protocol typically employs benchmark datasets containing known bioactive molecules and structurally similar but inactive molecules (decoys) for specific protein targets [32]. The DEKOIS 2.0 benchmark set is one such widely used resource that provides challenging decoy sets for various protein targets with a typical active-to-decoy ratio of 1:30 [32]. The screening performance is determined by the method's ability to prioritize known bioactive molecules over decoys, with effectiveness quantified through EF and GH calculations at various screening thresholds.
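The active-to-decoy ratio of a benchmark fixes the highest EF any method can reach, which is useful context when reading reported values. The sketch below computes that ceiling for a hypothetical target set of 40 actives and 1,200 decoys, chosen to be consistent with the 1:30 ratio mentioned above; the set sizes and the rounding convention for the subset size are assumptions.

```python
def max_enrichment_factor(n_actives, n_total, fraction):
    """Upper bound on EF at a given fraction for a given benchmark composition."""
    n_selected = max(1, round(fraction * n_total))
    # Best case: the selected subset is filled with actives first.
    best_hits = min(n_actives, n_selected)
    return (best_hits / n_selected) / (n_actives / n_total)

# Hypothetical benchmark composition with a 1:30 active-to-decoy ratio.
n_actives, n_decoys = 40, 1200
n_total = n_actives + n_decoys

for fraction in (0.01, 0.05, 0.10):
    ceiling = max_enrichment_factor(n_actives, n_total, fraction)
    print(f"max EF at {fraction:.0%}: {ceiling:.1f}")
```

With this composition the top 1% holds only 12 compounds, so the ceiling equals the inverse of the active fraction (1240/40 = 31), and it falls once the subset becomes larger than the number of actives.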

Diagram 1: Virtual Screening Benchmarking Workflow. This flowchart illustrates the standard experimental protocol for evaluating virtual screening performance using EF and GH metrics.

Performance Evaluation in Structure-Based Pharmacophore Modeling

In structure-based pharmacophore modeling studies, EF and GH scores play a critical role in model selection and validation. A recent study on GPCR-targeted pharmacophore models demonstrated a rigorous approach where pharmacophore models were generated in experimentally determined and modeled structures of 13 target GPCRs with known active ligands [31]. The performance assessment involved calculating both EF and GH scoring metrics to determine pharmacophore model performance, with particular emphasis on EF due to its relevance to experimental workflows [31]. The study implemented a "cluster-then-predict" machine learning workflow to identify pharmacophore models likely to possess higher enrichment values, achieving positive predictive values of 0.88 and 0.76 for selecting high-enrichment pharmacophore models from experimentally determined and modeled structures, respectively [31].

Advanced Statistical Considerations

Recent methodological advances have highlighted the importance of proper statistical inference when comparing enrichment metrics. The uncertainty associated with estimating enrichment curves can be substantial, particularly at the small testing fractions that interest researchers most [33]. Appropriate inference must account for two often-overlooked sources of correlation: correlation across different testing fractions within a single algorithm, and correlation between competing algorithms [33]. For pointwise comparisons at specific testing fractions, the EmProc hypothesis testing approach has been found to be most effective, while for inference along entire curves, EmProc-based confidence bands are recommended for simultaneous coverage with minimal width [33].
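To give a feel for how large this uncertainty can be, the sketch below attaches a simple percentile bootstrap interval to an EF estimate. This is a generic resampling scheme substituted for illustration; it is not the EmProc procedure referenced above and does not address the correlation between competing algorithms. All data are synthetic, and the function names and parameters are assumptions.

```python
import random

def enrichment_factor(ranked_labels, fraction):
    """EF for a ranked list of 1/0 labels (best-scored first)."""
    n_total = len(ranked_labels)
    n_selected = max(1, round(fraction * n_total))
    hits = sum(ranked_labels[:n_selected])
    return (hits / n_selected) / (sum(ranked_labels) / n_total)

def bootstrap_ef_interval(scores, labels, fraction, n_boot=500, alpha=0.05, seed=0):
    """Percentile bootstrap interval for EF, resampling compounds with replacement."""
    rng = random.Random(seed)
    pairs = list(zip(scores, labels))
    estimates = []
    for _ in range(n_boot):
        sample = [rng.choice(pairs) for _ in pairs]
        if sum(y for _, y in sample) == 0:
            continue  # EF is undefined for a resample without actives
        sample.sort(key=lambda p: p[0], reverse=True)
        estimates.append(enrichment_factor([y for _, y in sample], fraction))
    estimates.sort()
    lower = estimates[int(alpha / 2 * len(estimates))]
    upper = estimates[min(len(estimates) - 1, int((1 - alpha / 2) * len(estimates)))]
    return lower, upper

# Synthetic benchmark: 30 actives scoring higher on average than 900 decoys.
data_rng = random.Random(42)
labels = [1] * 30 + [0] * 900
scores = [data_rng.gauss(1.5, 1.0) if y else data_rng.gauss(0.0, 1.0) for y in labels]

ranked = [y for _, y in sorted(zip(scores, labels), reverse=True)]
point = enrichment_factor(ranked, 0.05)
lower, upper = bootstrap_ef_interval(scores, labels, 0.05)
print(f"EF5% = {point:.2f}, 95% bootstrap interval = ({lower:.2f}, {upper:.2f})")
```

When two methods are compared on the same benchmark, resampling the same compounds for both in each bootstrap round is one simple way to respect the between-method correlation.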

Comparative Performance Data Across Virtual Screening Methods

Docking and Machine Learning Rescoring Performance

Table 2: Performance comparison of docking tools with machine learning rescoring for PfDHFR variants

| Screening Method | Variant | EF1% | Key Findings |
|---|---|---|---|
| AutoDock Vina + RF-Score | Wild-Type PfDHFR | Improved from worse-than-random | Significant improvement with ML rescoring [32] |
| AutoDock Vina + CNN-Score | Wild-Type PfDHFR | Improved from worse-than-random | Significant improvement with ML rescoring [32] |
| PLANTS + CNN-Score | Wild-Type PfDHFR | 28 | Best enrichment for wild-type variant [32] |
| FRED + CNN-Score | Quadruple-Mutant PfDHFR | 31 | Best enrichment for resistant variant [32] |
| Traditional Scoring (Reference) | Both | <10 | Lower than ML-enhanced approaches [32] |

Recent benchmarking studies against both wild-type and drug-resistant variants of Plasmodium falciparum dihydrofolate reductase (PfDHFR) have demonstrated the substantial performance gains achievable through machine learning rescoring of traditional docking outputs. The analysis evaluated three docking tools (AutoDock Vina, PLANTS, and FRED) against both wild-type and quadruple-mutant PfDHFR variants, with subsequent rescoring using two pretrained machine learning scoring functions (CNN-Score and RF-Score-VS v2) [32]. The results revealed that rescoring with CNN-Score consistently improved structure-based virtual screening performance and enriched diverse, high-affinity binders for both PfDHFR variants [32]. These findings offer useful guidance for malaria drug discovery, especially against highly resistant variants.

Ligand-Based Virtual Screening Performance

Ligand-based virtual screening approaches have also demonstrated competitive performance using EF as a key metric. A study on shape-based screening with a novel scoring function (HWZ score) reported an average EF significantly higher than that of traditional similarity search methods [34]. When tested against 40 protein targets in the Directory of Useful Decoys (DUD) database, the HWZ score-based virtual screening approach achieved an average hit rate of 46.3% ± 6.7% at the top 1% of screened compounds [34]. This retrospective hit rate is well above the 1-5% hit rates typically cited for high-throughput experimental screening, although benchmark hit rates depend on the active-to-decoy ratio of the dataset and are not directly comparable to prospective campaigns.

Support Vector Machines for Virtual Screening

Support vector machines (SVMs) have emerged as powerful ligand-based virtual screening tools, with demonstrated capability to achieve high enrichment factors when screening large compound libraries. In a comprehensive assessment, SVM models were developed for identifying active compounds of single mechanisms (HIV protease inhibitors, DHFR inhibitors, dopamine antagonists) and multiple mechanisms (CNS active agents) [35]. When screening libraries of 2.986 million compounds from the PubChem database, the SVM approach achieved yields of 52.4-78.0%, hit rates of 4.7-73.8%, and enrichment factors of 214-10,543 [35]. These results compare favorably with structure-based virtual screening (yields: 62-95%, hit rates: 0.65-35%, enrichment factors: 20-1200) and other ligand-based virtual screening tools (yields: 55-81%, hit rates: 0.2-0.7%, enrichment factors: 110-795) when screening libraries of ≥1 million compounds [35].

Table 3: Key research reagents and computational tools for virtual screening performance assessment

| Tool/Resource | Type | Primary Function | Application in EF/GH Studies |
|---|---|---|---|
| DEKOIS 2.0 | Benchmark Dataset | Provides known actives and challenging decoys | Standardized performance assessment [32] |
| Directory of Useful Decoys (DUD) | Benchmark Dataset | Curated active-inactive pairs for 40+ targets | Method validation and comparison [34] |
| AutoDock Vina | Docking Software | Molecular docking with traditional scoring | Baseline docking performance [32] |
| PLANTS | Docking Software | Protein-ligand docking with ant colony optimization | Comparative docking studies [32] |
| FRED | Docking Software | Exhaustive rigid-body docking | High-performance docking evaluations [32] |
| CNN-Score | Machine Learning | Neural network-based binding affinity prediction | Docking pose rescoring and performance enhancement [32] |
| RF-Score-VS | Machine Learning | Random forest-based virtual screening | Improved enrichment in large library screening [32] |
| ROCS | Shape-Based Screening | Rapid overlay of chemical structures | Ligand-based screening benchmark [34] |
| Support Vector Machines | Machine Learning | Binary classification of active/inactive compounds | High-enrichment screening in large libraries [35] |
| LIT-PCBA | Benchmark Dataset | 15 targets with confirmed actives and inactives | Pharmacophore model validation [10] |

The rigorous assessment of virtual screening performance through Enrichment Factor and Goodness-of-Hit scores remains fundamental to advancing computational drug discovery. The comparative data presented in this guide demonstrate that while traditional docking methods provide reasonable baseline performance, their effectiveness can be substantially enhanced through machine learning rescoring, with EF1% values improving from worse-than-random to 28-31 in optimized pipelines [32]. Ligand-based methods, including shape-based screening and support vector machines, continue to offer competitive performance, particularly through their computational efficiency and ability to maintain high enrichment factors when screening extremely large compound libraries [34] [35]. The ongoing development of benchmark datasets and standardized assessment protocols ensures that performance claims can be objectively validated across different screening methodologies and target classes. As virtual screening continues to evolve, EF and GH scores will remain essential metrics for guiding method selection and optimization in structure-based drug design.

The Receiver Operating Characteristic (ROC) curve is a fundamental graphical tool for evaluating the performance of binary classification models, with extensive applications in assessing pharmacophore model quality in drug discovery [36]. By plotting the True Positive Rate (TPR) against the False Positive Rate (FPR) across all possible classification thresholds, the ROC curve visually represents the trade-off between a model's sensitivity and its false alarm rate [37] [38]. The Area Under the Curve (AUC) provides a single scalar value that summarizes the overall ability of the model to discriminate between positive and negative cases, with a value of 1.0 representing perfect classification and 0.5 representing performance equivalent to random guessing [39] [40].

These metrics are particularly valuable in pharmacophore research because they offer critical threshold-invariance and scale-invariance properties [38]. Threshold invariance means the evaluation isn't dependent on a single arbitrary probability cutoff for classifying compounds as active or inactive, which is essential when screening large chemical databases where the optimal threshold may vary based on project goals. Scale invariance ensures that models predicting on different probability scales can be directly compared, as the metric focuses on the ranking of predictions rather than their absolute values [38]. This makes ROC-AUC ideal for objectively comparing different pharmacophore models and virtual screening strategies.

Theoretical Foundations and Interpretation

Key Metrics and Calculations

The construction and interpretation of ROC curves rely on several fundamental metrics derived from the confusion matrix [41]. The True Positive Rate (TPR), also called sensitivity or recall, measures the proportion of actual active compounds correctly identified as active by the model [38]. The False Positive Rate (FPR) represents the proportion of inactive compounds incorrectly classified as active [38]. These metrics are calculated as follows:

- TPR = TP / (TP + FN)

- FPR = FP / (FP + TN)

where TP = True Positives, FN = False Negatives, FP = False Positives, and TN = True Negatives [40].
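The definitions above translate directly into code. The following minimal sketch counts the confusion-matrix cells at one score threshold and then sweeps the threshold over every observed score to collect ROC points. The function names and the five-compound toy ranking are hypothetical, and a compound is treated as predicted active when its score is at or above the threshold.

```python
def confusion_counts(scores, labels, threshold):
    """Count TP, FP, FN, TN when score >= threshold means 'predicted active'."""
    tp = fp = fn = tn = 0
    for score, label in zip(scores, labels):
        predicted_active = score >= threshold
        if predicted_active and label == 1:
            tp += 1
        elif predicted_active and label == 0:
            fp += 1
        elif label == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def tpr_fpr(scores, labels, threshold):
    """TPR = TP / (TP + FN), FPR = FP / (FP + TN)."""
    tp, fp, fn, tn = confusion_counts(scores, labels, threshold)
    return tp / (tp + fn), fp / (fp + tn)


def roc_points(scores, labels):
    """(FPR, TPR) pairs from the strictest to the most permissive threshold."""
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tpr, fpr = tpr_fpr(scores, labels, threshold)
        points.append((fpr, tpr))
    return points


# Toy screening result: 1 = active, 0 = inactive
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]
for fpr, tpr in roc_points(scores, labels):
    print(f"FPR = {fpr:.2f}, TPR = {tpr:.2f}")
```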

The AUC has a compelling probabilistic interpretation: it equals the probability that the model will rank a randomly chosen positive instance (e.g., an active compound) higher than a randomly chosen negative instance (e.g., an inactive compound) [37] [40]. For a pharmacophore model, this means that an AUC of 0.8 indicates an 80% probability that the model will assign a higher score to a randomly selected active compound than to a randomly selected inactive compound during virtual screening [37].
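This probabilistic reading can be checked by brute force: compare every active with every inactive and count how often the active scores higher, crediting ties as half a win. The sketch below does exactly that. The function name and toy data are hypothetical, and the pairwise loop is meant for illustration only, since it scales with the product of the two class sizes.

```python
def auc_pairwise(scores, labels):
    """AUC as the fraction of (active, inactive) pairs ranked correctly."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for a in actives:
        for i in inactives:
            if a > i:
                wins += 1.0   # active ranked above inactive
            elif a == i:
                wins += 0.5   # ties count as half
    return wins / (len(actives) * len(inactives))


# Toy screening result: 1 = active, 0 = inactive
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]
print(f"AUC = {auc_pairwise(scores, labels):.3f}")
```

In this toy ranking, five of the six active-inactive pairs are ordered correctly, so the AUC is 5/6.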

AUC Interpretation Guidelines

The AUC value provides a standardized measure for classifying model performance, with established interpretation guidelines in diagnostic and predictive modeling [39]:

Table 1: Clinical Interpretation of AUC Values

| AUC Value | Interpretation Suggestion |
|---|---|
| 0.9 ≤ AUC | Excellent |
| 0.8 ≤ AUC < 0.9 | Considerable |
| 0.7 ≤ AUC < 0.8 | Fair |
| 0.6 ≤ AUC < 0.7 | Poor |
| 0.5 ≤ AUC < 0.6 | Fail |

These classifications provide researchers with a common framework for evaluating pharmacophore model performance. However, it's crucial to consider the 95% confidence interval alongside the point estimate of the AUC, as a wide interval indicates substantial uncertainty in the performance estimate [39]. Statistical tests such as the DeLong test should be used when formally comparing AUC values between different models to determine if observed differences are statistically significant [39] [42].
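To illustrate why the confidence interval matters, the sketch below maps an AUC value to the bands in the table and applies the same mapping to both ends of an interval. The function names and the example numbers are hypothetical. The table does not cover values below 0.5, so the label returned for that range is an assumption of this sketch.

```python
def interpret_auc(auc):
    """Map an AUC value to the interpretation bands listed in the table."""
    if not 0.0 <= auc <= 1.0:
        raise ValueError("AUC must lie between 0 and 1")
    if auc >= 0.9:
        return "Excellent"
    if auc >= 0.8:
        return "Considerable"
    if auc >= 0.7:
        return "Fair"
    if auc >= 0.6:
        return "Poor"
    if auc >= 0.5:
        return "Fail"
    # Not covered by the table; label chosen for this sketch only
    return "Below table range (worse than random)"


def interpret_with_interval(auc, ci_lower, ci_upper):
    """Report the band for the point estimate and for both interval bounds."""
    return {
        "point": interpret_auc(auc),
        "lower": interpret_auc(ci_lower),
        "upper": interpret_auc(ci_upper),
    }


# Hypothetical model: AUC 0.84 with a 95% confidence interval of 0.72-0.93
print(interpret_with_interval(0.84, 0.72, 0.93))
```

Here the point estimate falls in the "Considerable" band while the interval stretches from "Fair" to "Excellent", which is the kind of uncertainty a point estimate alone would hide.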

Application to Pharmacophore Model Assessment

Validating Virtual Screening Performance

In pharmacophore-based virtual screening, ROC-AUC analysis serves as a primary method for quantifying a model's ability to enrich active molecules in virtual hit lists compared to random selection [36]. The screening process involves applying a pharmacophore model to large chemical libraries to identify compounds that match its spatial and chemical features [36]. The resulting rankings of compounds (from most to least likely to be active) form the basis for ROC curve construction.

High-quality pharmacophore models typically achieve significantly higher hit rates (often 5-40%) compared to random screening (typically <1%) when applied to diverse compound libraries [36]. The ROC-AUC metric quantifies this enrichment capability by measuring how well the model separates known active compounds from inactive ones across all possible score thresholds. This provides researchers with an objective, quantitative basis for selecting the most promising pharmacophore models before proceeding to costly experimental validation.

Experimental Design for Model Validation

Proper experimental design is essential for obtaining meaningful ROC-AUC values when validating pharmacophore models. The validation dataset must be carefully curated to include only compounds with experimentally confirmed activity data from target-based binding or enzyme activity assays [36]. Cell-based assay data should be avoided for validation purposes, as effects may result from mechanisms other than the intended target interaction [36].

The validation set should include structurally diverse molecules with appropriate activity cutoffs to exclude compounds with weak binding affinity [36]. When known inactive compounds are limited, decoy molecules with similar physicochemical properties but different topologies can be generated using resources like the Directory of Useful Decoys, Enhanced (DUD-E) [36]. A recommended ratio of approximately 1:50 active compounds to decoys helps simulate real-world screening conditions where active compounds are rare among large chemical libraries [36].
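The active-to-decoy ratio also sets a ceiling on the enrichment factor a validation set can report, which is worth checking before comparing models across datasets. The sketch below computes the number of decoys needed for a target ratio and the best achievable EF at a given screening fraction, assuming EF is defined as the hit-list active fraction divided by the library active fraction. The function names and set sizes are hypothetical.

```python
def decoys_needed(n_actives, decoys_per_active=50):
    """Number of decoys required for a 1:decoys_per_active ratio."""
    return n_actives * decoys_per_active


def max_enrichment_factor(n_actives, n_decoys, fraction):
    """Best possible EF when every top-ranked slot is filled by an active."""
    n_total = n_actives + n_decoys
    n_selected = max(1, round(fraction * n_total))
    best_hits = min(n_actives, n_selected)
    return (best_hits / n_selected) / (n_actives / n_total)


# Hypothetical validation set with 40 actives
n_actives = 40
n_decoys = decoys_needed(n_actives)  # 2000 decoys at a 1:50 ratio
print(f"Max EF1% at 1:50 = {max_enrichment_factor(n_actives, n_decoys, 0.01):.1f}")
print(f"Max EF1% at 1:30 = {max_enrichment_factor(n_actives, 40 * 30, 0.01):.1f}")
```

With these sizes the ceiling at 1% is 51 for a 1:50 set and 31 for a 1:30 set, so EF values from datasets with different ratios should be compared against their respective maxima.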

Figure 1: Workflow for Pharmacophore Model Validation Using ROC-AUC

Comparative Performance Data

Benchmarking Against Other Methods

ROC-AUC enables direct comparison of pharmacophore-based virtual screening against other lead identification methods. The following table summarizes typical performance ranges observed in prospective virtual screening studies:

Table 2: Performance Comparison of Screening Methods

| Screening Method | Typical Hit Rate | Key Advantages | Common AUC Range |
|---|---|---|---|
| Pharmacophore-Based VS | 5-40% [36] | High interpretability, structure-based insights | 0.7-0.9 [36] |
| High-Throughput Screening | <1% [36] | Experimental data, no model bias | 0.5 (random) |
| Deep Learning Generators (e.g., PGMG) | N/A (generation) | Novel chemical space exploration | Varies by target [9] |

The substantial advantage of pharmacophore-based approaches is evident in their significantly higher hit rates compared to random high-throughput screening. For example, specific targets have demonstrated particularly low random hit rates: glycogen synthase kinase-3β (0.55%), PPARγ (0.075%), and protein tyrosine phosphatase-1B (0.021%) [36]. Pharmacophore models that achieve AUC values above 0.8 for these targets would be expected to provide substantial enrichment over random screening, although the hit rate actually obtained depends on how many actives are ranked near the top of the list and not on the AUC alone.
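The size of this advantage can be made concrete with simple arithmetic: fold enrichment is the model's hit rate divided by the random hit rate. The sketch below combines the random hit rates quoted above with the 5% and 40% ends of the typical pharmacophore hit-rate range. These pairings are purely illustrative: the source reports the two sets of numbers separately, not as target-specific results, and the function and variable names are hypothetical.

```python
# Random hit rates quoted in the text, in percent
random_hit_rates = {
    "GSK-3beta": 0.55,
    "PPARgamma": 0.075,
    "PTP1B": 0.021,
}


def fold_enrichment(model_hit_rate_pct, random_hit_rate_pct):
    """How many times higher the model hit rate is than the random hit rate."""
    return model_hit_rate_pct / random_hit_rate_pct


# Illustrative pairing with the 5% and 40% ends of the typical range
for target, random_rate in random_hit_rates.items():
    low = fold_enrichment(5.0, random_rate)
    high = fold_enrichment(40.0, random_rate)
    print(f"{target}: {low:.0f}x to {high:.0f}x over random")
```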

Performance in Different Application Contexts