2D vs 3D QSAR: A Comprehensive Comparison for Modern Drug Discovery

Abstract

This article provides a thorough analytical comparison between 2D and 3D Quantitative Structure-Activity Relationship (QSAR) methodologies, addressing key considerations for researchers and drug development professionals. We explore the foundational principles distinguishing descriptor-based and spatial modeling approaches, examine their methodological implementations across therapeutic areas including cancer, infectious diseases, and neurological disorders, and analyze practical optimization strategies integrating machine learning and AI. The content delivers critical validation frameworks and performance comparisons to guide method selection, supported by recent case studies demonstrating successful applications in lead optimization and overcoming drug resistance. This resource aims to equip scientists with the knowledge to strategically implement QSAR approaches that accelerate and enhance their drug discovery pipelines.

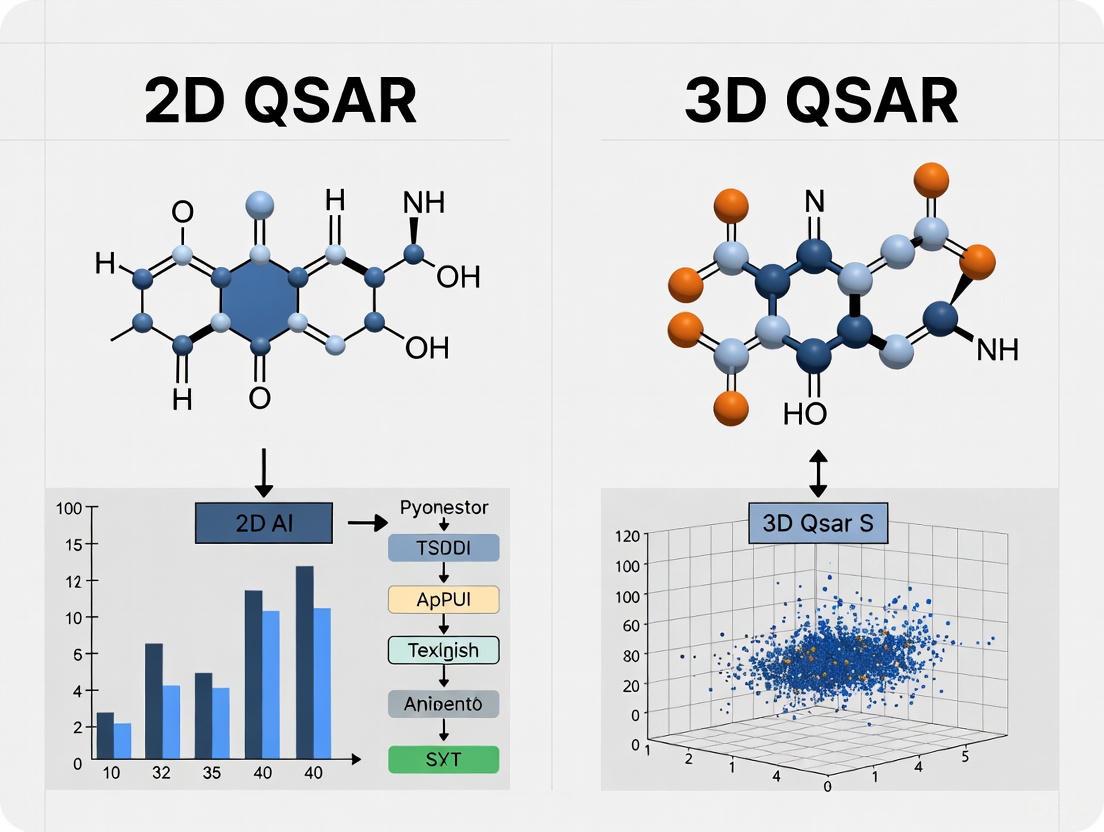

Understanding QSAR Dimensions: From Molecular Descriptors to 3D Fields

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone computational approach in chemical and pharmaceutical research that mathematically correlates molecular structural features with biological activity or chemical properties. Among QSAR methodologies, 2D-QSAR stands as a fundamental approach that utilizes two-dimensional molecular descriptors to build predictive models without requiring three-dimensional structural information. This guide examines the historical context, core principles, and methodological framework of 2D-QSAR modeling while objectively comparing its performance capabilities against contemporary 3D-QSAR approaches. Through systematic analysis of experimental protocols, validation metrics, and case studies across therapeutic domains, we provide researchers with a comprehensive reference for selecting and implementing appropriate QSAR strategies in drug discovery pipelines.

Historical Development and Theoretical Foundations

The conceptual foundation of QSAR emerged in the 1960s with the pioneering work of Corwin Hansch and Toshio Fujita, who established the first systematic approach to correlate biological activity with physicochemical parameters through linear free-energy relationships. This Hansch analysis paradigm represented the genesis of modern 2D-QSAR by demonstrating that substituent effects on biological activity could be quantified using parameters such as hydrophobicity (logP) and electronic properties (Hammett constants) [1]. The subsequent decades witnessed substantial methodological evolution, with the introduction of topological descriptors encoding molecular connectivity patterns and the development of increasingly sophisticated statistical approaches for model building [1].

The core theoretical premise of 2D-QSAR rests upon the fundamental principle that biological activity (or chemical properties) of compounds can be expressed as a mathematical function of their structural features, formally represented as:

Activity = f(physicochemical properties and/or structural properties) + error [1]
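For the linear models used in Hansch-type analysis, the general function above is commonly written as a multiple linear regression. The notation here is an assumption introduced for illustration: y_i is the activity of compound i (for example pIC₅₀), x_ij is the value of descriptor j for that compound, the β terms are fitted coefficients, and ε_i is the residual error.

```latex
y_i = \beta_0 + \sum_{j=1}^{p} \beta_j \, x_{ij} + \varepsilon_i, \qquad i = 1, \dots, n
```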

Unlike its 3D counterpart, 2D-QSAR does not require molecular alignment or conformational analysis, instead relying exclusively on descriptors derived from molecular graph representations that are invariant to rotation and translation [1] [2]. This descriptor-based approach calculates molecular features directly from the 2D molecular structure, encompassing a wide spectrum of physicochemical, topological, and electronic parameters that collectively encode information critical for biological interactions [3] [2].

Core Principles and Methodological Framework of 2D-QSAR

Essential Molecular Descriptors in 2D-QSAR

2D-QSAR modeling employs an extensive array of molecular descriptors that quantitatively encode structural information. These descriptors are computationally derived directly from the 2D molecular structure without spatial conformation and can be categorized into several distinct classes based on the structural properties they represent [3] [1] [2].

Table 1: Classification of Fundamental 2D-QSAR Molecular Descriptors

| Descriptor Category | Specific Examples | Structural Interpretation | Biological Relevance |
|---|---|---|---|
| Physicochemical Properties | LogP, molar refractivity, polar surface area, molecular weight | Hydrophobicity, bulkiness, polarity, size | Membrane permeability, solubility, absorption |
| Topological Descriptors | Connectivity indices (order 0, 1, 2), Wiener index, Zagreb index | Molecular branching, shape, atom connectivity | Molecular recognition, binding affinity |
| Electrostatic Descriptors | Partial atomic charges, HOMO/LUMO energies, ionization potential, electron affinity | Electron density distribution, nucleophilicity/electrophilicity | Electronic interactions, reaction mechanisms |
| Geometrical Descriptors | Molecular volume, surface area, shadow indices | Molecular size and shape | Steric complementarity to biological targets |
| Quantum Chemical Descriptors | Heat of formation, dipole moment, dipole energy [3] | Electronic state, energy, reactivity | Binding interactions, reaction rates |
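To make the topological row of Table 1 concrete, the sketch below computes the Wiener index, defined as the sum of shortest-path bond counts over all pairs of heavy atoms. The adjacency-dictionary representation and the function name `wiener_index` are assumptions chosen for illustration; cheminformatics toolkits provide their own implementations.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path bond counts over all unordered atom pairs."""
    total = 0
    for source in adjacency:
        # Breadth-first search gives topological distances from `source`.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in dist:
                    dist[nbr] = dist[atom] + 1
                    queue.append(nbr)
        total += sum(dist.values())
    return total // 2  # each pair was counted once from each end


# Hydrogen-suppressed carbon skeletons (atom index -> bonded atom indices).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

print(wiener_index(n_butane))   # 10
print(wiener_index(isobutane))  # 9
```

The two isomers share a molecular formula but differ in branching, which is why the more compact isobutane skeleton yields the smaller index.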

The 2D-QSAR Model Development Workflow

The construction of a robust 2D-QSAR model follows a systematic, iterative process comprising distinct stages from data collection through model validation. The following diagram illustrates this standardized workflow:

Figure 1: Standardized workflow for developing 2D-QSAR models, illustrating the sequential stages from data collection to model application.

Data Collection and Preprocessing

The initial phase involves assembling a structurally diverse dataset of compounds with corresponding biological activity values, typically expressed as half-maximal inhibitory concentration (IC₅₀), minimum inhibitory concentration (MIC), or other potency measures [3] [4]. These experimental values are converted to a logarithmic scale (pIC₅₀ = -log₁₀ IC₅₀, with IC₅₀ in molar units) to linearize the relationship with free energy changes [4]. The dataset is then partitioned into training and test sets, typically at a ratio between 70:30 and 80:20, to ensure sufficient compounds for model development while retaining an external validation subset [4] [5].
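The conversion and partitioning steps can be sketched as follows. This is a minimal illustration, assuming IC₅₀ values reported in micromolar and a simple random 80:20 split; the compound names, activity values, and function names are hypothetical, and published studies may instead use activity-stratified or diversity-based splitting.

```python
import math
import random


def pic50_from_ic50_um(ic50_um):
    """Convert an IC50 given in micromolar to pIC50 = -log10(IC50 in mol/L)."""
    return -math.log10(ic50_um * 1e-6)


def split_train_test(records, test_fraction=0.2, seed=42):
    """Shuffle reproducibly and hold out `test_fraction` as an external test set."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


# Hypothetical compound IDs and IC50 values (micromolar), for illustration only.
ic50_um = {"cpd_%02d" % i: 0.05 * (i + 1) for i in range(10)}
dataset = [(name, pic50_from_ic50_um(value)) for name, value in ic50_um.items()]

train, test = split_train_test(dataset, test_fraction=0.2)
print(len(train), len(test))  # 8 2
```

Fixing the random seed keeps the split reproducible, which matters when validation statistics from different modeling runs are compared.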

Molecular Optimization and Descriptor Calculation

Molecular structures undergo computational optimization using molecular mechanics (MM2/MM3) or semi-empirical quantum chemical methods (AM1, PM3) to achieve minimum energy conformations [3] [5]. Subsequently, specialized software platforms calculate a comprehensive set of 2D descriptors encompassing constitutional, topological, geometrical, and quantum chemical parameters [3] [2]. Descriptor redundancy is addressed through elimination of constant or near-constant variables and correlation analysis to remove highly intercorrelated descriptors that can lead to model overfitting [2].
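The redundancy filter described above can be sketched in a few lines. The thresholds (`min_variance`, `max_abs_r`), the descriptor names, and all numeric values are assumptions for illustration; the cited studies do not specify them here.

```python
from statistics import mean, pvariance


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long value lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def filter_descriptors(table, min_variance=1e-6, max_abs_r=0.95):
    """Drop near-constant columns, then the later column of each highly correlated pair."""
    kept = []
    for name, values in table.items():
        if pvariance(values) < min_variance:
            continue  # constant or near-constant descriptor
        if any(abs(pearson_r(values, table[k])) > max_abs_r for k in kept):
            continue  # redundant with a descriptor that was already retained
        kept.append(name)
    return kept


# Hypothetical descriptor table: column name -> one value per compound.
descriptors = {
    "logP": [1.2, 2.5, 3.1, 0.8, 2.0],
    "MW": [310.0, 180.0, 250.0, 230.0, 150.0],
    "MR": [82.0, 48.0, 66.5, 61.0, 40.5],       # nearly proportional to MW
    "n_rings": [2.0, 2.0, 2.0, 2.0, 2.0],       # constant across the series
    "TPSA": [40.0, 75.0, 20.0, 60.0, 95.0],
}
print(filter_descriptors(descriptors))  # ['logP', 'MW', 'TPSA']
```

Which member of a correlated pair is dropped depends on column order in this sketch; in practice the more interpretable descriptor is often kept.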

Model Development and Validation

Statistical techniques ranging from multiple linear regression (MLR) to advanced machine learning methods (Random Forest, Support Vector Machines, Neural Networks) are employed to establish mathematical relationships between descriptors and biological activity [6] [2] [7]. Model robustness is evaluated using internal validation techniques such as leave-one-out (LOO) or leave-group-out (LGO) cross-validation, while predictive ability is assessed using the external test set [3] [6] [4]. Additional validation through Y-scrambling ensures the absence of chance correlations [1].
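A minimal sketch of leave-one-out cross-validation and Y-scrambling is shown below. To stay dependency-free it assumes a single-descriptor linear model rather than full MLR, and it computes Q² against the mean of the full activity set, which is one of several conventions in use. The data values and function names are hypothetical.

```python
import random
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for one descriptor: returns (slope, intercept)."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx


def q2_loo(x, y):
    """Leave-one-out Q2 = 1 - PRESS / SS_total."""
    press = 0.0
    for i in range(len(x)):
        # Refit the model without compound i, then predict compound i.
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    y_mean = mean(y)
    ss_total = sum((b - y_mean) ** 2 for b in y)
    return 1.0 - press / ss_total


def y_scrambling(x, y, n_rounds=100, seed=0):
    """Mean LOO Q2 after shuffling activities; expected to be far below the real Q2."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = y[:]
        rng.shuffle(shuffled)
        scores.append(q2_loo(x, shuffled))
    return mean(scores)


# Hypothetical descriptor values and pIC50 activities, for illustration only.
logp = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
pic50 = [4.9, 5.3, 5.6, 6.2, 6.4, 7.1, 7.3, 7.9]

real_q2 = q2_loo(logp, pic50)
scrambled_q2 = y_scrambling(logp, pic50)
print(round(real_q2, 3), round(scrambled_q2, 3))
```

If the scrambled models scored close to the real one, the original correlation would be suspect as a chance finding.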

Experimental Protocols and Validation Metrics

Case Study: 2D-QSAR Model for Anti-Tuberculosis Compounds

A representative 2D-QSAR investigation was conducted on natural products and derivatives with anti-tuberculosis activity against Mycobacterium tuberculosis [3]. The study compiled 79 compounds with reported minimum inhibitory concentration (MIC) values, which were converted to -log(MIC) to serve as the dependent variable. Molecular structures were optimized using the MO-G computational application with augmented Molecular Mechanics (MM2/MM3) parameters, with minimization continuing until energy changes fell below 0.001 kcal/mol or after 300 iterations [3].

The researchers calculated 42 different physicochemical descriptors using the QSAR module of Scigress Explorer software, followed by forward feed multiple linear regression with leave-one-out cross-validation to identify the most significant descriptors [3]. The resulting model achieved a regression coefficient of R² = 0.74 and a leave-one-out cross-validated coefficient of R²CV = 0.72, with dipole energy and heat of formation identified as the descriptors most strongly correlated with anti-tubercular activity [3].

Case Study: 2D-QSAR Model for NSCLC Therapeutic Agents

In a separate study focusing on non-small cell lung cancer (NSCLC), researchers developed a 2D-QSAR model using 45 tetrahydropyrazolo-quinazoline and tetrahydropyrazolo-pyrimidocarbazole derivatives [4]. Antiproliferative activities against A549 NSCLC cell lines (IC₅₀ values) were converted to pIC₅₀ using the relationship pIC₅₀ = -log(IC₅₀ × 10⁻⁶) [4].

The resulting model reported R² = 0.798, adjusted R² = 0.754, cross-validated Q² = 0.673, and external test set R² = 0.531 [4]. The fit and cross-validation statistics indicate a reasonably robust model, while the lower external R² points to more modest predictive power on unseen compounds when screening for novel NSCLC therapeutic agents.
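For reference, the statistics quoted above are usually defined as follows. This is a sketch of the common textbook forms, not necessarily the exact variants used in the cited study. The symbols are assumptions introduced here: n is the number of training compounds, p the number of descriptors, ŷ_i the fitted value, and ŷ_(i) the prediction for compound i from a model trained without it.

```latex
\begin{aligned}
R^2 &= 1 - \frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{\sum_{i=1}^{n} (y_i - \bar{y})^2} \\
R^2_{\mathrm{adj}} &= 1 - \left(1 - R^2\right) \frac{n - 1}{n - p - 1} \\
Q^2 &= 1 - \frac{\sum_{i=1}^{n} \left(y_i - \hat{y}_{(i)}\right)^2}{\sum_{i=1}^{n} (y_i - \bar{y})^2}
\end{aligned}
```

Because the adjusted R² penalizes each additional descriptor, it is always at most equal to R², consistent with the 0.754 versus 0.798 reported above.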

Comparative Analysis: 2D-QSAR versus 3D-QSAR

Performance Metrics Across Methodologies

Direct comparison of 2D and 3D-QSAR approaches reveals distinct advantages and limitations for each methodology. The following table synthesizes performance data from multiple studies to facilitate objective comparison:

Table 2: Comparative Performance Analysis of 2D-QSAR and 3D-QSAR Approaches Across Multiple Studies

| Study Context | QSAR Method | Statistical Performance | Key Advantages | Methodological Limitations |
|---|---|---|---|---|
| SARS-CoV-2 Mpro Inhibitors [7] | 2D-QSAR (MLP with Morgan fingerprints) | R² training=1.00, q² CV=0.80, R² test=0.72 | No molecular alignment required, rapid virtual screening | Limited insight into 3D structural requirements |
| | 3D-QSAR (Field QSAR) | R² training=0.96, q² CV=0.81, R² test=0.71 | Visualizes 3D pharmacophoric requirements | Alignment-sensitive, computationally intensive |
| Histamine H3 Receptor Antagonists [6] | 2D-QSAR (MLR) | MAPE=2.9-3.6, SDEP=0.31-0.36 | Simpler implementation, comparable to advanced methods | Limited to QSAR prediction only |
| | 2D-QSAR (ANN) | MAPE=2.9-3.6, SDEP=0.31-0.36 | Captures non-linear relationships | "Black box" interpretation challenges |
| | 3D-QSAR (HASL) | Inferior to 2D methods | - | Lower predictive accuracy in this application |
| Organic Compound Degradation [8] | 2D-QSAR | R²=0.898, q²=0.841, Q²ext=0.968 | Excellent predictive power for reaction rates | Limited 3D interaction insights |
| | 3D-QSAR (CoMSIA) | R²=0.952, q²=0.951, Q²ext=0.970 | Superior statistical fit, electrostatic field analysis | Requires conformer generation and alignment |
| Dipeptide-Alkylated Nitrogen-Mustard Compounds [5] | 2D-QSAR (Linear HM) | R²=0.798 (from other study [4]) | Identifies key electronic descriptors | Limited to linear relationships |
| | 2D-QSAR (Non-linear GEP) | Training R²=0.95, Test R²=0.87 | Captures complex non-linear patterns | Complex model interpretation |
| | 3D-QSAR (CoMSIA) | N/A provided | Visual guidance for molecular modification | Requires reliable alignment rules |

Methodological Differentiation and Selection Criteria

The comparative analysis reveals that 2D-QSAR approaches generally excel in predictive accuracy for biological activity when 3D structural requirements are either well-encoded in 2D descriptors or when the dataset contains congeneric series with conserved binding modes [6] [7]. The strength of 2D-QSAR lies in its computational efficiency, minimal pre-processing requirements, and exceptional suitability for high-throughput virtual screening of large chemical libraries [2].

Conversely, 3D-QSAR methodologies provide superior structural insights and stereochemical guidance for molecular modification, particularly valuable during lead optimization phases when detailed understanding of steric and electrostatic requirements is necessary [8] [7]. However, this enhanced structural insight comes with increased computational complexity, alignment sensitivity, and conformational dependence that can introduce variability in model performance [8] [5].

Selection between these approaches should be guided by specific research objectives: 2D-QSAR is recommended for rapid activity prediction and virtual screening applications, while 3D-QSAR is more appropriate for molecular design and optimization tasks requiring spatial understanding of structure-activity relationships [7].

Essential Research Reagents and Computational Tools

Successful implementation of 2D-QSAR modeling requires both computational tools and cheminformatics resources. The following table details essential solutions for conducting 2D-QSAR research:

Table 3: Essential Research Reagent Solutions for 2D-QSAR Modeling

| Tool Category | Specific Solutions | Application Function | Key Features |
|---|---|---|---|
| Molecular Modeling Platforms | Scigress Explorer [3], HyperChem [5] | Molecular structure optimization and descriptor calculation | MM2/MM3 force fields, semi-empirical methods (AM1, PM3) |
| Descriptor Calculation Software | CODESSA [5], RDKit [2] [7] | Comprehensive descriptor calculation | Constitutional, topological, electrostatic, quantum chemical descriptors |
| Statistical Analysis Environments | Flare Python API [2], Scikit-learn | Model development and validation | Multiple Linear Regression, Random Forest, SVM, Neural Networks |
| Cheminformatics Toolkits | RDKit [7], OpenBabel | Molecular representation and manipulation | SMILES parsing, fingerprint generation, substructure search |
| Validation Tools | Custom Python/R scripts [2], QSAR model validation scripts | Model robustness assessment | Cross-validation, Y-scrambling, applicability domain analysis |

2D-QSAR maintains a fundamental position in the computational chemistry toolbox, offering robust predictive capability for biological activity and chemical properties through efficient descriptor-based modeling. Its historical development reflects continuous methodological refinement, while its core principles remain grounded in establishing quantitative relationships between structural features and biological responses. The comparative analysis presented herein demonstrates that 2D-QSAR delivers predictive performance comparable to, and in some cases superior to, 3D-QSAR approaches while requiring fewer computational resources and avoiding complex molecular alignment procedures.

For research applications prioritizing high-throughput screening and rapid activity prediction, 2D-QSAR represents an optimal methodology, particularly during early discovery phases. The integration of modern machine learning approaches with traditional 2D descriptors continues to expand the capabilities of this established paradigm, ensuring its ongoing relevance in contemporary drug discovery workflows. As computational power increases and descriptor sets become more sophisticated, 2D-QSAR methodology will continue to evolve, maintaining its essential role in rational drug design and chemical property prediction.

Theoretical Foundations: Beyond Flatland - The Shift from 2D to 3D Descriptors

Quantitative Structure-Activity Relationship (QSAR) methodologies are foundational to contemporary drug design, enabling the prediction of a molecule's biological activity based on its chemical and physical characteristics. While classical 2D-QSAR correlates one- or two-dimensional molecular descriptors (e.g., molecular weight, logP) with biological activity, 3D-QSAR represents a natural extension that exploits the three-dimensional properties of the ligands to build more predictive and mechanistically insightful models [9] [10]. This paradigm shift is crucial because drug interactions occur in three-dimensional space; a molecule's biological effect is determined not just by its constituent atoms, but by their precise spatial arrangement and the resulting molecular fields [10].

The core principle of 3D-QSAR is that the difference in the three-dimensional structural properties of molecules is responsible for variations in their biological activities [9]. These methods apply empirical force field calculations on three-dimensionally aligned ligand structures, allowing for the analysis of steric, electrostatic, hydrophobic, and hydrogen-bonding fields surrounding the molecules [11] [12]. This provides a superior level of insight compared to 2D methods, as it helps identify specific regions in space where particular molecular features enhance or diminish biological activity.

A Comparative Framework: 2D-QSAR vs. 3D-QSAR

The choice between 2D and 3D approaches hinges on the research question, available data, and desired outcome. The table below summarizes their core distinctions.

| Feature | 2D-QSAR | 3D-QSAR |
|---|---|---|
| Molecular Representation | 1D and 2D descriptors (e.g., logP, molecular weight, topological indices) [10]. | 3D structure and conformation-dependent fields (e.g., steric, electrostatic) [10] [12]. |
| Descriptor Basis | Physicochemical, electronic, and topological properties derived from molecular formula or graph [10]. | Interaction energies (steric, electrostatic) calculated between a probe and the molecule at grid points [12]. |
| Core Requirement | A set of compounds with known activity [10]. | A set of compounds with known activity and a valid spatial alignment rule [12]. |
| Key Advantage | Computationally fast; does not require molecular conformation or alignment [10]. | Provides visual, interpretable maps of favorable/unfavorable chemical regions [13] [12]. |
| Primary Limitation | Lacks spatial context, limiting mechanistic insight for receptor-based design [9]. | Highly dependent on the correctness of molecular alignment and conformation choice [12]. |
| Typical Applications | Early-stage profiling for ADMET properties, rapid virtual screening [10] [14]. | Lead optimization, understanding structure-activity relationships, and designing novel analogs [9] [12]. |

Performance and Predictive Power: Experimental Comparisons

Direct comparisons in scientific studies reveal that the performance of 2D and 3D methods can be context-dependent.

Case Study 1: Predicting H3 Receptor Antagonist Binding

A study on arylbenzofuran-derived histamine H3 receptor antagonists compared Multiple Linear Regression (MLR, a 2D method), Artificial Neural Networks (ANN, a more complex 2D method), and HASL (a 3D-QSAR method). The results demonstrated that the simpler 2D method was highly competitive [6].

- MLR (2D): Mean Absolute Percentage Error (MAPE) = 2.9-3.6, Standard Deviation of Error of Prediction (SDEP) = 0.31-0.36 [6].

- ANN (2D): MAPE = 2.9-3.6, SDEP = 0.31-0.36 [6].

- HASL (3D): Results "were not as good as those obtained by 2D methods" [6].

The study concluded that "simple traditional approaches such as MLR method can be as reliable as... more advanced and sophisticated methods like ANN and 3D-QSAR analyses" [6].
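The two error measures reported in this case study can be computed as sketched below. The definitions are the commonly used ones (MAPE in percent, SDEP as the root mean squared prediction error) and are assumed rather than taken from the cited paper; the observed and predicted values are hypothetical.

```python
import math


def mape(observed, predicted):
    """Mean absolute percentage error, in percent."""
    ratios = [abs((o - p) / o) for o, p in zip(observed, predicted)]
    return 100.0 * sum(ratios) / len(ratios)


def sdep(observed, predicted):
    """Standard deviation of error of prediction (root mean squared prediction error)."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical observed and predicted pKi values, for illustration only.
observed = [8.0, 7.5, 9.0, 8.5]
predicted = [7.8, 7.8, 8.7, 8.5]

print(round(mape(observed, predicted), 2))  # 2.46
print(round(sdep(observed, predicted), 3))  # 0.235
```

MAPE is scale-relative while SDEP is expressed in the activity units themselves, so the two are best read together.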

Case Study 2: Corrosion Inhibition by Pyrazole Derivatives

In contrast, research on pyrazole corrosion inhibitors showed that modern machine learning models applied to 3D descriptors can achieve excellent predictive performance, sometimes surpassing their 2D counterparts [15].

- XGBoost with 2D Descriptors: Training set R² = 0.96; Test set R² = 0.75 [15].

- XGBoost with 3D Descriptors: Training set R² = 0.94; Test set R² = 0.85 [15].

This indicates that for this specific dataset, the 3D descriptor model generalized better to the test set, showing higher predictive R².

The 3D-QSAR Workflow: From Structures to Predictive Models

The development of a robust 3D-QSAR model follows a meticulous, multi-stage process. The workflow below outlines the critical steps from initial data preparation to final model application.

Detailed Experimental Protocols

Step 1: Conformational Analysis and Selection

The first critical step is generating a biologically relevant 3D conformation for each molecule. This often involves energy minimization using molecular mechanics (MM) force fields (e.g., MM3, MM+) or semi-empirical methods (e.g., AM1) to find low-energy conformers [11] [12]. For molecules with flexible rotatable bonds, a systematic scan of dihedral angles may be performed to reflect energetically preferred conformations, as the choice of conformation can profoundly impact the model's quality [12].

Step 2: Molecular Alignment

This is the most crucial step, because it defines the model's frame of reference. Molecules must be superimposed in 3D space based on a common hypothesis. Common protocols include:

- Common Substructure Alignment: A shared core structure (e.g., a pyridinium ring in MNVP+ analogs) is used for fitting atoms [12].

- Pharmacophore-Based Alignment: Molecules are aligned based on their shared pharmacophoric features (e.g., hydrogen bond donors/acceptors, aromatic rings).

- Database Mining: Using the SelectKBest approach or Genetic Algorithm-Partial Least Squares (GA-PLS) to select the most relevant descriptors from a large pool (e.g., >1000 descriptors from software like Dragon) for model building [15] [11].

Step 3: Field Calculation and Model Building

The aligned molecules are placed in a 3D grid. A probe atom (e.g., an sp³ carbon with a +1 charge) is used to calculate steric (Lennard-Jones) and electrostatic (Coulombic) interaction energies at each grid point. These interaction energies become the independent variables (descriptors) for the model [12]. Statistical methods like Partial Least Squares (PLS) are then used to correlate these field variables with the biological activity data [10] [12]. Modern implementations may also use advanced machine learning techniques like Gaussian Process Regression (GPR) or similarity descriptors based on molecular shape and electrostatics to build consensus models [13].
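The grid-based field calculation can be illustrated with a simplified sketch. It assumes a single set of Lennard-Jones parameters for every atom, a distance-independent dielectric, and a symmetric energy cutoff. These are illustrative simplifications, not the parameterization of any particular CoMFA implementation, and the coordinates, charges, and function names are hypothetical.

```python
import math
from itertools import product

COULOMB_KCAL = 332.06  # converts e^2/angstrom to kcal/mol


def probe_energies(atoms, point, probe_charge=1.0, epsilon=0.1, r_min=3.4, cutoff=30.0):
    """Steric (Lennard-Jones 6-12) and electrostatic (Coulomb) probe energies at one grid point."""
    steric = electrostatic = 0.0
    for x, y, z, charge in atoms:
        # Clamp very short distances to avoid division by zero at atom centres.
        r = max(math.dist((x, y, z), point), 0.5)
        steric += epsilon * ((r_min / r) ** 12 - 2.0 * (r_min / r) ** 6)
        electrostatic += COULOMB_KCAL * probe_charge * charge / r
    # Truncate extreme values, which arise at points inside the molecule.
    return min(steric, cutoff), max(-cutoff, min(electrostatic, cutoff))


def field_descriptors(atoms, lower=-4.0, upper=4.0, spacing=2.0):
    """Flatten steric and electrostatic energies over a cubic grid into one descriptor vector."""
    n_points = int((upper - lower) / spacing) + 1
    axis = [lower + i * spacing for i in range(n_points)]
    steric, electrostatic = [], []
    for point in product(axis, repeat=3):
        s, e = probe_energies(atoms, point)
        steric.append(s)
        electrostatic.append(e)
    return steric + electrostatic


# Hypothetical aligned molecule: (x, y, z, partial charge) per atom, for illustration only.
molecule = [(0.0, 0.0, 0.0, -0.4), (1.5, 0.0, 0.0, 0.4)]
vector = field_descriptors(molecule)
print(len(vector))  # 250
```

Even this coarse 5 × 5 × 5 grid yields 250 descriptors per molecule, which illustrates why latent-variable methods such as PLS are preferred over ordinary regression for field data.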

The Scientist's Toolkit: Essential Reagents and Software

A range of specialized software is available to execute the complex workflow of 3D-QSAR modeling.

| Tool Name | Type | Primary Function in 3D-QSAR |
|---|---|---|
| OpenEye's 3D-QSAR [13] | Commercial Software | Creates consensus models using shape/electrostatic similarity descriptors (ROCS, EON) and machine learning (kPLS, GPR). Valued for interpretable visual results. |
| 3D-QSAR.com [16] | Web Platform | Offers user-friendly, web-based tools for developing ligand-based and structure-based 3D-QSAR models and managing molecular datasets. |
| SYBYL (with CoMFA/CoMSIA) [12] | Commercial Suite | The classic industry platform for performing Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Indices Analysis (CoMSIA). |
| QSAR Toolbox [17] | Freemium Software | A data-rich platform primarily for chemical hazard assessment, supporting category formation, read-across, and the ability to run external QSAR models. |
| Open3DQSAR [14] | Open-Source Tool | Provides transparent 3D-QSAR analysis for scientists who prefer an open-source and flexible environment for their research. |

The exploration of 3D-QSAR underscores its unique value in drug discovery: it translates abstract chemical structures into visual, three-dimensional maps that guide medicinal chemists toward more potent compounds. While 2D-QSAR remains a powerful tool for rapid property prediction, 3D-QSAR provides superior mechanistic insight during lead optimization by highlighting the spatial and electronic features critical for binding [12].

The future of 3D-QSAR is being shaped by integration with advanced artificial intelligence. Modern implementations are moving beyond traditional PLS, incorporating machine learning algorithms like XGBoost and CatBoost to handle molecular field data [15]. Furthermore, the drive for interpretability is being addressed by techniques like SHAP analysis, which identifies key descriptors and validates the relevance of selected variables, strengthening the model's reliability [15]. As these tools become more accessible and integrated into user-friendly web platforms and automated workflows, 3D-QSAR will continue to be a cornerstone of rational drug design.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational chemistry and drug discovery, providing crucial mathematical frameworks that connect a molecule's chemical structure to its biological activity or physicochemical properties. At the heart of every QSAR model lie molecular descriptors—quantitative representations of structural features that enable this predictive capability. These descriptors are broadly categorized into four fundamental classes that form the foundation of structure-activity analysis: electronic, steric, hydrophobic, and quantum-chemical parameters.

The evolution of QSAR since its beginnings in the 1960s has dramatically expanded both the breadth and depth of descriptor utilization. Traditional QSAR modeling was initially viewed strictly as an analytical physical-chemical approach applicable only to small congeneric series of molecules [18]. The technique was first introduced by Hansch et al. based on implications from linear free-energy relationships and the Hammett equation, operating on the fundamental assumption that differences in physicochemical properties account for differences in biological activities of compounds [18]. These core structural properties remain essential to modern QSAR practice, though their calculation and application have grown increasingly sophisticated.

This guide examines these key descriptor categories within the broader research framework comparing 2D versus 3D QSAR approaches—two distinct paradigms that utilize molecular descriptors in fundamentally different ways to predict chemical behavior and biological activity. Understanding these descriptor categories and their implementation across QSAR methodologies provides researchers with critical insights for selecting appropriate modeling strategies in drug development and chemical property prediction.

Theoretical Foundations: The Four Key Descriptor Categories

Electronic Parameters

Electronic parameters quantify the distribution of electrons within a molecule, directly influencing intermolecular interactions, binding affinity, and chemical reactivity. These descriptors capture a molecule's ability to participate in electrostatic interactions, hydrogen bonding, and charge-transfer complexes—critical factors in drug-receptor recognition and binding.

The Hammett constant (σ) represents a classical electronic parameter that describes the electron-donating or withdrawing properties of substituents through their influence on ionization constants of benzoic acid derivatives [18]. Modern computational approaches have expanded this concept to include molecular dipole moments, atomic partial charges, and electrostatic potential maps derived from quantum mechanical calculations. In 3D-QSAR methods like CoMFA (Comparative Molecular Field Analysis), electronic properties are sampled using probe atoms to generate electrostatic potential fields around aligned molecules, providing rich data for activity prediction [6] [7].
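The Hammett relationship mentioned above is usually written as shown below. The notation is assumed here for illustration: K_X and K_H are the ionization constants of the substituted and unsubstituted benzoic acids, σ_X is the substituent constant, and ρ is the reaction constant, which is defined as 1 for benzoic acid ionization in water at 25 °C.

```latex
\log_{10} \frac{K_X}{K_H} = \rho \, \sigma_X
```

Electron-withdrawing substituents increase the acidity of benzoic acid and therefore have positive σ values, while electron-donating substituents have negative values.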

Steric Parameters

Steric parameters describe the spatial occupancy and shape characteristics of molecules, encompassing size, volume, and topological dimensions that influence molecular fit into biological targets and accessibility to reaction sites. Steric effects can either facilitate or hinder biological activity through complementarity with receptor binding pockets.

Traditional steric descriptors include Verloop STERIMOL parameters [18] and Taft's steric constants, which quantify the spatial requirements of substituents. Contemporary 3D-QSAR approaches implement sophisticated steric field calculations using van der Waals probes and shape-based alignment algorithms [7]. The Cresset Field 3D-QSAR method, for instance, utilizes molecular field points from the XED force field to sample volume and shape characteristics for each molecule in the training set, generating descriptors that capture subtle steric influences on activity [7].

Hydrophobic Parameters

Hydrophobic parameters quantify the relative affinity of molecules or substituents for lipophilic versus aqueous environments, directly influencing transport properties, membrane permeability, and binding interactions driven by desolvation effects. Hydrophobicity represents a critical determinant in drug absorption, distribution, and bioavailability.

The octanol-water partition coefficient (Log P) and its pH-dependent counterpart Log D serve as the fundamental quantitative measures of hydrophobicity [18]. In classical QSAR, Hansch substituent hydrophobicity constants (π) parameterize the contribution of specific substituents to overall molecular lipophilicity [18]. Modern descriptor calculations incorporate these principles through computational methods that estimate partition coefficients and solvent-accessible surface areas, with tools like ACD/Labs and Dragon software routinely generating these parameters for QSAR modeling [6] [11].
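The substituent constant π and the link between Log P and Log D can be stated compactly. The first line is the standard Hansch-Fujita definition relative to the unsubstituted parent compound. The second is a common approximation for a monoprotic acid that assumes only the neutral species partitions into octanol. The symbols are introduced here for illustration.

```latex
\begin{aligned}
\pi_X &= \log P_{\mathrm{R\text{-}X}} - \log P_{\mathrm{R\text{-}H}} \\
\log D_{\mathrm{pH}} &\approx \log P - \log_{10}\!\left(1 + 10^{\,\mathrm{pH} - \mathrm{p}K_a}\right)
\end{aligned}
```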

Quantum-Chemical Parameters

Quantum-chemical parameters derive from quantum mechanical calculations and describe electronic structure properties with higher theoretical rigor than classical electronic parameters. These descriptors provide insights into reactivity, stability, and intermolecular interaction capabilities that directly influence biological activity.

Key quantum-chemical descriptors include the energies of the highest occupied and lowest unoccupied molecular orbitals (HOMO and LUMO), which relate to nucleophilicity and electrophilicity respectively; molecular orbital coefficients that predict reaction regioselectivity; and ionization potentials influencing electron-transfer capabilities [11]. Studies demonstrate these parameters can be calculated using semiempirical methods like AM1, with software packages such as HyperChem enabling their computation for QSAR modeling [11]. The inclusion of quantum-chemical descriptors often enhances model interpretability by connecting observed activities to fundamental electronic structure principles.
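Several derived descriptors follow directly from the frontier orbital energies. The sketch below uses the Koopmans-type approximations IP ≈ -E(HOMO) and EA ≈ -E(LUMO), which are an assumption of this example rather than the procedure of the cited studies; the orbital energies are hypothetical.

```python
def frontier_orbital_descriptors(e_homo, e_lumo):
    """Derive reactivity descriptors from orbital energies (same unit as the inputs, e.g. eV)."""
    ionization_potential = -e_homo  # Koopmans-type approximation
    electron_affinity = -e_lumo     # Koopmans-type approximation
    return {
        "gap": e_lumo - e_homo,
        "ionization_potential": ionization_potential,
        "electron_affinity": electron_affinity,
        "hardness": (ionization_potential - electron_affinity) / 2.0,
        "electronegativity": (ionization_potential + electron_affinity) / 2.0,
    }


# Hypothetical orbital energies in eV, for illustration only.
result = frontier_orbital_descriptors(e_homo=-9.0, e_lumo=-1.0)
print(result["gap"], result["hardness"], result["electronegativity"])  # 8.0 4.0 5.0
```

A small HOMO-LUMO gap corresponds to low chemical hardness, which is often read as higher reactivity.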

Comparative Analysis: 2D vs. 3D QSAR Descriptor Implementation

Fundamental Methodological Differences

The distinction between 2D and 3D QSAR approaches fundamentally resides in how molecular structures are represented and compared. 2D-QSAR utilizes descriptors derived from molecular constitution and topology without explicit spatial reference, while 3D-QSAR incorporates the three-dimensional arrangement of atoms and functional groups, requiring molecular alignment to a common framework [6].

2D-QSAR methods employ numerical descriptors that can be calculated directly from molecular connection tables or 2D structural representations. These include constitutional descriptors (atom and bond counts, molecular weight), topological indices (connectivity indices, path counts), and electronic parameters calculated without spatial coordinates [11]. The relative computational simplicity of 2D descriptors enables rapid screening of large compound libraries without conformational analysis or alignment requirements.

3D-QSAR approaches extend this paradigm by incorporating spatial molecular fields and properties sampled in three dimensions. Methods such as CoMFA (Comparative Molecular Field Analysis), HASL (Hypothetical Active Site Lattice), and Field 3D-QSAR utilize probe atoms to map electrostatic and steric properties onto grid points surrounding aligned molecules [6] [7]. This generates significantly larger descriptor sets that capture shape complementarity and spatial property distributions relevant to biological recognition.
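The grid-sampling idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the CoMFA, HASL, or Cresset implementation: the two-atom "molecule", the Lennard-Jones parameters (`r_min`, `eps`), the grid extent, and the energy cutoff are all hypothetical values chosen for readability.

```python
import math

# Hypothetical aligned molecule: (x, y, z, partial_charge) per atom, in angstroms.
molecule = [(0.0, 0.0, 0.0, -0.4), (1.2, 0.0, 0.0, 0.4)]

def grid_points(lo, hi, spacing):
    """Yield the points of a regular cubic lattice spanning [lo, hi] on each axis."""
    n = int(round((hi - lo) / spacing)) + 1
    axis = [lo + i * spacing for i in range(n)]
    for x in axis:
        for y in axis:
            for z in axis:
                yield (x, y, z)

def probe_fields(point, mol, probe_charge=1.0, r_min=3.4, eps=0.1, cutoff=30.0):
    """Return (steric, electrostatic) probe energies at one grid point.

    Steric: 12-6 Lennard-Jones term with illustrative r_min and eps values.
    Electrostatic: Coulomb term using the 332 kcal*A/(mol*e^2) conversion factor.
    Both energies are truncated to [-cutoff, +cutoff].
    """
    steric = 0.0
    electrostatic = 0.0
    for x, y, z, charge in mol:
        r = max(math.dist(point, (x, y, z)), 0.1)  # guard against r = 0
        steric += eps * ((r_min / r) ** 12 - 2 * (r_min / r) ** 6)
        electrostatic += 332.0 * probe_charge * charge / r
    clip = lambda value: max(-cutoff, min(cutoff, value))
    return clip(steric), clip(electrostatic)

# One descriptor row per molecule: both fields at every grid point, flattened.
row = []
for point in grid_points(-4.0, 4.0, 2.0):
    row.extend(probe_fields(point, molecule))
print(len(row))
```

Even this coarse 5 x 5 x 5 lattice yields 250 descriptor columns for a single molecule, which illustrates why field-based descriptor sets are so much larger than typical 2D sets and why methods such as PLS are used to handle them.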

Performance Comparison: Predictive Accuracy and Applications

Direct comparative studies provide valuable insights into the relative performance of 2D and 3D QSAR methodologies across different applications. A 2012 study comparing Multiple Linear Regression (MLR), Artificial Neural Networks (ANN), and HASL 3D-QSAR for predicting histamine H3 receptor antagonist activity found that 2D methods (MLR and ANN) outperformed the 3D HASL approach in prediction accuracy [6]. The calculated values for the mean absolute percentage error (MAPE) ranged from 2.9 to 3.6 for both MLR and ANN methods, while results from 3D-QSAR studies using HASL were not as robust [6].

Conversely, a 2025 study on pyrazole corrosion inhibitors reported strong predictive ability for both descriptor types with XGBoost models: R² = 0.96 (2D) and R² = 0.94 (3D) on the training set [15]. On the test set, the 3D descriptors generalized better (R² = 0.85) than the 2D descriptors (R² = 0.75), which suggests that the relative efficacy of the two approaches depends on the endpoint and dataset [15].

Recent SARS-CoV-2 Mpro inhibitor studies using Cresset's Flare software showed comparable predictive performance between 2D and 3D approaches, with both methods generating models with test set r² values around 0.72 [7]. However, the 3D-QSAR approach provided additional interpretability through visualization of regions where the model predicts strong effects on activity, highlighting the value of 3D methods beyond pure predictive accuracy [7].

Table 1: Performance Comparison of 2D vs. 3D QSAR Approaches in Recent Studies

| Study Focus | 2D Method Performance | 3D Method Performance | Best Performing Algorithm | Key Findings |

|---|---|---|---|---|

| Pyrazole corrosion inhibitors [15] | Training: R² = 0.96, Test: R² = 0.75 | Training: R² = 0.94, Test: R² = 0.85 | XGBoost | 3D descriptors showed superior test set performance |

| SARS-CoV-2 Mpro inhibitors [7] | Test set r² = 0.72 (MLP with Morgan fingerprints) | Test set r² = 0.72 (MLP 3D-QSAR) | Multiple (comparable performance) | Both methods showed comparable predictive accuracy |

| Histamine H3 receptor antagonists [6] | MAPE: 2.9-3.6, SDEP: 0.31-0.36 | Inferior to 2D methods | MLR and ANN | Traditional 2D methods outperformed 3D HASL approach |

Mechanistic Interpretability and Visualization Capabilities

A critical distinction between 2D and 3D QSAR approaches lies in their capacity for mechanistic interpretation and structural insight. While 2D-QSAR models often provide excellent predictive capability, they typically function as "black boxes" with limited direct translation to structural modifications [6].

3D-QSAR methods excel in interpretability, generating visual representations of molecular regions where specific property enhancements would improve activity. For example, the Cresset Field 3D-QSAR method illustrates electrostatic and steric model coefficients superimposed on molecular structures, identifying favorable and unfavorable regions for modification [7]. In SARS-CoV-2 Mpro inhibitor studies, this approach identified specific molecular regions where less positive charge would improve activity and highlighted the 2-chlorobenzyl moiety as the optimal region for steric modification [7].

This spatial interpretability provides medicinal chemists with direct structural insights for molecular optimization—a significant advantage over the often-opaque correlation equations generated by 2D-QSAR models. The 3D-QSAR contour maps effectively serve as visual guides for rational drug design, indicating where specific steric or electronic modifications would likely enhance biological activity.

Experimental Protocols and Methodologies

Standard 2D-QSAR Development Workflow

The development of robust 2D-QSAR models follows a systematic protocol encompassing data collection, descriptor calculation, feature selection, model building, and validation [19] [20].

Step 1: Data Set Curation and Preparation

- Collect biological activity data (e.g., IC₅₀, Ki, EC₅₀) for a congeneric series of compounds

- Apply inclusion criteria based on data quality and structural diversity

- Divide compounds into training and test sets using activity stratification to ensure representative distribution [7]
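One simple way to realize an activity-stratified split is to rank the compounds by activity and assign every k-th compound to the test set, so that both sets cover the full activity range. The sketch below assumes this ranking scheme; the cited studies may have used a different procedure, and the compound identifiers and pIC50 values are invented for illustration.

```python
def stratified_split(activities, test_every=4):
    """Rank compounds by activity and hold out one compound per block of `test_every`.

    `activities` maps a compound id to its activity value (e.g. pIC50).
    Returns (training_ids, test_ids), both ordered by increasing activity.
    """
    ranked = sorted(activities, key=activities.get)
    # Take the compound in the middle of each block so the test set
    # stays inside the activity range covered by the training set.
    test = [cid for i, cid in enumerate(ranked) if i % test_every == test_every // 2]
    held_out = set(test)
    train = [cid for cid in ranked if cid not in held_out]
    return train, test

# Hypothetical pIC50 values for eight compounds.
pic50 = {"cpd1": 4.1, "cpd2": 7.3, "cpd3": 5.0, "cpd4": 6.2,
         "cpd5": 4.8, "cpd6": 6.9, "cpd7": 5.6, "cpd8": 7.7}
train_ids, test_ids = stratified_split(pic50)
print(train_ids, test_ids)
```

With `test_every=4`, roughly a quarter of the compounds are held out, and the most and least active compounds remain in the training set.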

Step 2: Molecular Descriptor Calculation

- Generate 2D structures and optimize using molecular mechanics (MM+ force field) or semiempirical methods (AM1) [11]

- Calculate descriptors using software packages such as Dragon, HyperChem, or ACD/Labs [11]

- Compute diverse descriptor types including constitutional, topological, geometrical, and quantum-chemical parameters [11]

Step 3: Descriptor Selection and Reduction

- Apply feature selection algorithms like Genetic Algorithm-Partial Least Squares (GA-PLS) to identify most relevant descriptors [11]

- Remove highly correlated descriptors (e.g., correlation threshold >0.95) to reduce multicollinearity [11]

- Retain approximately 10% of initially calculated descriptors for model building [11]
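The correlation filter in this step can be sketched as a greedy pass over the descriptor table: a descriptor is kept only if its absolute Pearson correlation with every descriptor already kept does not exceed the threshold. The descriptor names and values below are hypothetical, and the greedy ordering is an assumption; other tie-breaking rules (for example, keeping the descriptor better correlated with activity) are equally common.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient; returns 0.0 if either column is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)

def drop_correlated(table, threshold=0.95):
    """Keep a descriptor only if |r| <= threshold against all descriptors kept so far."""
    kept = []
    for name in table:
        if all(abs(pearson(table[name], table[k])) <= threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical descriptor table: one list of values per descriptor, one entry per compound.
table = {
    "MW": [100, 120, 140, 160, 180],
    "heavy_atoms": [7, 9, 10, 12, 13],          # nearly collinear with MW
    "TPSA": [20.0, 45.0, 30.0, 60.0, 25.0],
}
print(drop_correlated(table))
```

Constant and near-constant columns should be removed in a separate pass, since a correlation coefficient is undefined for them.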

Step 4: Model Development and Validation

- Implement multiple algorithms: Multiple Linear Regression (MLR), Partial Least Squares (PLS), Artificial Neural Networks (ANN), Support Vector Machines (SVM), Random Forest (RF), or Deep Neural Networks (DNN) [6] [21]

- Perform internal validation using leave-one-out or leave-many-out cross-validation [20]

- Conduct external validation using the predetermined test set [19] [20]

- Apply stringent validation criteria including Golbraikh and Tropsha parameters, concordance correlation coefficient (CCC), and residual analysis [20]
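Two of the validation statistics named above can be written out explicitly. The sketch below computes a leave-one-out q² (as 1 − PRESS / SS_total, using the overall mean of the observed activities) for a one-descriptor linear model, and Lin's concordance correlation coefficient between observed and predicted values. The single-descriptor model and the data are simplifying assumptions made so that the example needs no external libraries.

```python
def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
             / sum((a - mean_x) ** 2 for a in x))
    return slope, mean_y - slope * mean_x

def loo_q2(x, y):
    """Leave-one-out q2 = 1 - PRESS / SS_total for a one-descriptor model."""
    press = 0.0
    for i in range(len(x)):
        # Refit without compound i, then predict compound i.
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    mean_y = sum(y) / len(y)
    return 1.0 - press / sum((b - mean_y) ** 2 for b in y)

def ccc(observed, predicted):
    """Lin's concordance correlation coefficient."""
    n = len(observed)
    mean_o, mean_p = sum(observed) / n, sum(predicted) / n
    cov = sum((o - mean_o) * (p - mean_p) for o, p in zip(observed, predicted)) / n
    var_o = sum((o - mean_o) ** 2 for o in observed) / n
    var_p = sum((p - mean_p) ** 2 for p in predicted) / n
    return 2 * cov / (var_o + var_p + (mean_o - mean_p) ** 2)

# Hypothetical descriptor values and activities for six compounds.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [1.1, 1.9, 3.2, 3.9, 5.1, 5.8]
slope, intercept = fit_line(x, y)
fitted = [slope * v + intercept for v in x]
print(loo_q2(x, y), ccc(y, fitted))
```

Unlike a plain correlation coefficient, the CCC penalizes systematic offsets between predicted and observed values, which is why it is often reported for external test sets.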

Standard 3D-QSAR Development Workflow

3D-QSAR methodologies incorporate additional steps for molecular alignment and spatial field calculation, introducing complexity but enhancing interpretability [7].

Step 1: Molecular Modeling and Conformation Generation

- Build 3D molecular structures from 2D representations

- Perform conformational search within a specified energy window (typically 2.5 kcal/mol) [7]

- Select biologically relevant conformations based on experimental data or computational docking
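The energy-window criterion amounts to a simple filter on relative conformer energies. In the sketch below, the conformer identifiers and energies are hypothetical, and the energies are assumed to be in kcal/mol and to come from whatever conformational search tool is in use.

```python
def within_energy_window(conformers, window=2.5):
    """Keep conformers whose energy lies within `window` kcal/mol of the lowest one.

    `conformers` is a list of (conformer_id, energy) pairs.
    Returns (conformer_id, relative_energy) pairs for the retained conformers.
    """
    lowest = min(energy for _, energy in conformers)
    return [(cid, energy - lowest) for cid, energy in conformers
            if energy - lowest <= window]

# Hypothetical conformer energies in kcal/mol.
conformers = [("conf_a", 12.3), ("conf_b", 13.1), ("conf_c", 15.9), ("conf_d", 14.6)]
retained = within_energy_window(conformers)
print([cid for cid, _ in retained])
```

A wider window retains more candidate conformations for alignment at the cost of including geometries that are less likely to be populated.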

Step 2: Molecular Alignment

- Align molecules to a common reference framework using maximum common substructure (MCS) algorithms [7]

- Utilize crystallographic ligand coordinates from protein-ligand complexes when available [7]

- Apply alternative alignment rules to assess model sensitivity to alignment method

Step 3: Molecular Field Calculation

- Sample steric and electrostatic fields using probe atoms on 3D grid points surrounding aligned molecules

- Implement standard probes: sp³ carbon atom for steric fields and H⁺ ion for electrostatic fields [7]

- Calculate interaction energies using appropriate force fields (e.g., Cresset XED force field) [7]

Step 4: Model Building and Validation

- Apply Partial Least Squares (PLS) regression to correlate field values with biological activity

- Implement machine learning approaches including k-Nearest Neighbors (kNN), SVM, Gaussian Process Regression (GPR), Random Forest (RF), and Multilayer Perceptron (MLP) for 3D-QSAR [7]

- Perform cross-validation and external validation using the same rigorous standards as 2D-QSAR

- Generate 3D coefficient contour maps for visual interpretation of results [7]

Machine Learning Integration in Modern QSAR

Contemporary QSAR practices increasingly incorporate advanced machine learning algorithms that transcend the traditional 2D/3D dichotomy. Studies demonstrate that methods like Deep Neural Networks (DNN) and Random Forest (RF) can achieve superior predictive performance compared to traditional regression-based QSAR approaches [21].

In comparative studies using the same dataset and molecular descriptors, machine learning methods (DNN and RF) exhibited higher prediction accuracy (r² ~0.90) than traditional QSAR methods (PLS and MLR with r² ~0.65) when sufficient training data was available [21]. Notably, with smaller training sets, DNN maintained higher predictive performance (r² = 0.94) compared to RF (r² = 0.84), while traditional methods showed significant performance degradation [21].

Table 2: Performance of Computational Algorithms in QSAR Modeling

| Algorithm Type | Representative Methods | Strengths | Limitations | Ideal Use Cases |

|---|---|---|---|---|

| Traditional Regression | MLR, PLS | Interpretable models, minimal computational requirements | Limited complexity handling, prone to overfitting with many descriptors | Small congeneric series, preliminary analysis |

| Machine Learning | RF, SVM, XGBoost | Handles complex nonlinear relationships, robust with large descriptor sets | "Black box" nature, extensive parameter tuning required | Diverse compound sets, large descriptor spaces |

| Deep Learning | DNN, ANN | Automatic feature detection, excels with large datasets | Extensive data requirements, computational intensity | Very large datasets, complex structure-activity relationships |

| 3D-QSAR Specific | Field 3D-QSAR, CoMFA, HASL | High interpretability, spatial visualization | Alignment sensitivity, computational expense | Lead optimization, structure-based design |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful QSAR modeling requires specialized software tools and computational resources for descriptor calculation, model development, and validation. The following table summarizes key solutions used in contemporary QSAR research, as identified from the experimental protocols of the cited studies.

Table 3: Essential Computational Tools for QSAR Research

| Tool Category | Specific Software/Platform | Primary Function | Key Features | Applicable QSAR Type |

|---|---|---|---|---|

| Molecular Modeling | HyperChem [11] | 3D structure generation and optimization | MM+ force field, AM1 semiempirical calculations | Both 2D and 3D QSAR |

| Descriptor Calculation | Dragon [11] | Comprehensive descriptor calculation | 1481+ 1D, 2D, and 3D molecular descriptors | Primarily 2D-QSAR |

| Descriptor Calculation | ACD/Labs [11] | Physicochemical property prediction | Log D, pKa, molar volume, parachor | Primarily 2D-QSAR |

| 3D-QSAR Specific | Cresset Flare [7] | 3D-QSAR model development | Field 3D-QSAR, XED force field, molecular alignment | Primarily 3D-QSAR |

| 3D-QSAR Specific | HASL [6] | 3D-QSAR using lattice approach | Hypothetical Active Site Lattice generation | Primarily 3D-QSAR |

| Cheminformatics | RDKit [7] | Open-source cheminformatics | Molecular fingerprints, descriptor calculation | Both 2D and 3D QSAR |

| Machine Learning | Scikit-learn, TensorFlow [21] | Advanced model development | DNN, RF, SVM, and other ML algorithms | Both 2D and 3D QSAR |

| Validation Tools | Various statistical packages [20] | Model validation and assessment | External validation parameters, applicability domain | Both 2D and 3D QSAR |

The comparative analysis of electronic, steric, hydrophobic, and quantum-chemical parameters across 2D and 3D QSAR methodologies reveals a nuanced landscape where each approach offers distinct advantages depending on research objectives. 2D-QSAR provides computational efficiency, straightforward implementation, and strong predictive performance for congeneric series, making it ideal for high-throughput screening and preliminary SAR analysis. Conversely, 3D-QSAR delivers superior mechanistic interpretability through spatial visualization of molecular regions critical for activity, offering invaluable guidance for lead optimization in advanced drug discovery stages.

Contemporary research trends indicate a paradigm shift toward hybrid approaches that leverage the strengths of both methodologies. Machine learning algorithms increasingly bridge the gap between descriptor types, handling complex relationships in high-dimensional data spaces while providing robust predictive models [21]. The critical importance of rigorous validation—particularly external validation with compounds outside the training set—cannot be overstated for both approaches, as this remains the ultimate measure of model utility and reliability [19] [20].

Strategic selection between 2D and 3D QSAR approaches should be guided by specific research context: available computational resources, dataset characteristics, project timeline, and ultimately, whether the primary need is quantitative prediction or structural insight for molecular design. As computational power increases and algorithms evolve, the integration of these complementary approaches will continue to enhance our ability to translate chemical structure into predictive understanding of biological activity.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational medicinal chemistry, providing critical frameworks for predicting biologically relevant properties of chemical compounds. The theoretical foundations of modern QSAR rest upon three pivotal approaches: the Hammett equation, which introduced quantitative electronic parameters; the Hansch-Fujita method, which incorporated hydrophobic and steric effects; and the Free-Wilson model, which established the concept of group additivity. These classical methodologies form the essential groundwork upon which contemporary 2D and 3D-QSAR techniques have been built [22] [23]. Understanding these foundational approaches is prerequisite to meaningful comparison of modern 2D versus 3D-QSAR strategies in current drug discovery pipelines.

The historical development of QSAR began with observations by Meyer, Overton, and Ferguson regarding correlations between lipophilicity and biological activity [22] [23]. The field formally emerged in the early 1960s through independent work by Hansch and Fujita and by Free and Wilson, building upon Hammett's earlier linear free-energy relationships for chemical reactivity [18] [23]. These approaches established the principle that molecular properties could be quantitatively correlated with biological response, enabling predictive modeling in drug design.

Theoretical Foundations and Mathematical Formulations

Hammett Electronic Constants

The Hammett equation, developed by Louis Hammett in the 1930s, represents one of the earliest applications of linear free-energy relationships (LFER). It quantitatively describes how electronic effects of substituents influence reaction rates or equilibrium constants for organic reactions [23].

Mathematical Formulation:

$$\log\left(\frac{K}{K_0}\right) = \rho\,\sigma$$

Where:

- K = Rate or equilibrium constant for substituted compound

- K₀ = Rate or equilibrium constant for unsubstituted compound

- ρ = Reaction constant (sensitivity to substituent effects)

- σ = Substituent constant (electron-donating/withdrawing ability)

Hammett constants (σ) provide quantitative descriptors of substituent electronic properties, with positive values indicating electron-withdrawing groups and negative values indicating electron-donating groups [18] [23]. While originally developed for chemical reactivity, these principles formed the conceptual basis for extending quantitative relationships to biological systems.
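A short worked example may help make the relation log(K/K₀) = ρσ concrete. The Python sketch below applies it to the ionization of substituted benzoic acids in water, the reference reaction for which ρ is defined as 1. The σ values are commonly tabulated literature constants and the parent pKa of 4.20 is the usual textbook value for benzoic acid; the function names are illustrative.

```python
# Commonly tabulated Hammett substituent constants (para and meta positions).
SIGMA = {"H": 0.00, "p-NO2": 0.78, "p-OCH3": -0.27, "m-Cl": 0.37}

def hammett_log_ratio(sigma, rho):
    """log(K/K0) predicted by the Hammett equation."""
    return rho * sigma

def predicted_pka(sigma, rho=1.0, pka_parent=4.20):
    """pKa of a substituted acid, using log(K/K0) = pKa_parent - pKa."""
    return pka_parent - hammett_log_ratio(sigma, rho)

for substituent, sigma in SIGMA.items():
    print(f"{substituent:7s} sigma = {sigma:+.2f}  predicted pKa = {predicted_pka(sigma):.2f}")
```

The electron-withdrawing nitro group (positive σ) lowers the predicted pKa, while the electron-donating methoxy group (negative σ) raises it, in line with the sign convention described above.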

Hansch-Fujita Analysis

Hansch and Fujita revolutionized QSAR by extending Hammett's electronic parameters to include hydrophobic and steric effects, creating a multiparameter approach that could model complex biological interactions [24] [22] [23]. This approach correlates biological activity with various physicochemical properties through multiple regression analysis.

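Linear Hansch Equation:

$$\log\left(\frac{1}{C}\right) = a \log P + b\,\sigma + c\,E_s + d$$

Nonlinear Hansch Equation (accounting for parabolic lipophilicity):

$$\log\left(\frac{1}{C}\right) = -a\,(\log P)^2 + b \log P + c\,\sigma + d$$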

Where:

- C = Molar concentration producing biological effect

- log P = Lipophilicity parameter (octanol-water partition coefficient)

- σ = Hammett electronic constant

- Eₛ = Taft steric parameter

- a, b, c, d = Coefficients determined by regression analysis

The Hansch approach operates on the principle that biological activity depends on a combination of transport properties (governed largely by lipophilicity) and interactions with the target site (influenced by electronic and steric properties) [24] [22].
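The practical consequence of a parabolic lipophilicity term is an optimum log P. Assuming the parabolic model takes the form log(1/C) = −a(log P)² + b·log P + const with a > 0 (electronic and steric terms folded into the constant for a fixed substituent pattern), setting the derivative with respect to log P to zero gives log P_opt = b / (2a). The coefficients in the sketch below are hypothetical, chosen only to illustrate the calculation.

```python
def log_inverse_c(log_p, a, b, const):
    """Parabolic Hansch model: log(1/C) = -a*(log P)^2 + b*log P + const."""
    return -a * log_p ** 2 + b * log_p + const

def optimal_log_p(a, b):
    """Lipophilicity at which the parabolic model predicts maximum activity."""
    if a <= 0:
        raise ValueError("a must be positive for the parabola to have a maximum")
    return b / (2 * a)

# Hypothetical regression coefficients.
a, b, const = 0.25, 1.0, 2.0
best = optimal_log_p(a, b)
print(best, log_inverse_c(best, a, b, const))
```

Compounds on either side of the optimum are predicted to be less active, which is the usual interpretation of transport-limited activity: too hydrophilic to cross membranes, or too lipophilic to leave them.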

Free-Wilson Analysis

The Free-Wilson model, developed concurrently with the Hansch approach, provides a mathematically distinct methodology based on the concept of group contribution additivity [24] [22].

Mathematical Formulation:

$$BA = \mu + \sum a_{ij}$$

Where:

- BA = Biological activity

- μ = Overall average biological activity of the series (in the Fujita-Ban variant, the activity of the reference compound, often the unsubstituted parent)

- aᵢⱼ = Contribution of substituent j at position i

- Σ(aᵢⱼ) = Sum of all substituent contributions

The model uses presence/absence of specific substituents at defined molecular positions as descriptor variables, which are correlated with biological activity using multiple linear regression [24] [22]. Each substituent's contribution is assumed to be constant and independent of other substituents in the molecule.

Mixed Approach

Recognizing the complementary strengths of Hansch and Free-Wilson methods, Kubinyi developed a mixed approach that integrates both methodologies [24] [22].

Mathematical Formulation:

$$\log\left(\frac{1}{C}\right) = \sum a_{ij} + \sum k_i \varphi_j + k$$

Where:

- Σ(aᵢⱼ) = Free-Wilson component (group contributions)

- Σ(kᵢφⱼ) = Hansch component (physicochemical properties)

- k = Constant term

This hybrid approach leverages the group contribution basis of Free-Wilson analysis while incorporating the mechanistic insights provided by physicochemical parameters from the Hansch approach [24] [22].

Table 1: Comparative Analysis of Classical QSAR Approaches

| Feature | Hammett Equation | Hansch-Fujita Analysis | Free-Wilson Analysis |

|---|---|---|---|

| Fundamental Basis | Linear Free-Energy Relationships | Multiparameter Extrathermodynamic Approach | Group Contribution Additivity |

| Key Parameters | σ (electronic) | log P (lipophilic), σ (electronic), Eₛ (steric) | Indicator variables for substituents |

| Mathematical Form | Linear: log(K/K₀) = ρσ | Linear/Nonlinear regression | Multiple linear regression with indicator variables |

| Primary Application | Chemical reactivity | Complex biological systems | Congeneric series with multiple substitution sites |

| Key Assumptions | Electronic effects dominate; substituent effects are additive and constant | Biological activity depends on transport and interaction properties | Group contributions are constant and position-specific |

| Historical Context | 1930s (earliest QSAR concept) | Early 1960s (foundational biological QSAR) | Early 1960s (parallel development to Hansch) |

Comparative Experimental Validation: Classical and Modern QSAR

Case Study: Histamine H₃ Receptor Antagonists

A comprehensive comparison of QSAR methodologies was performed using 58 arylbenzofuran-derived histamine H₃ receptor antagonists [6]. This study directly compared multiple linear regression (MLR, representing classical Hansch-type approaches), artificial neural networks (ANN, representing modern machine learning), and HASL (a 3D-QSAR method).

Experimental Protocol:

- Dataset: 58 compounds with measured receptor binding affinities

- Descriptor Calculation: Molecular descriptors including E_HOMO (electronic), LogD (lipophilic), Mor19v, Mor30m, Mor18u (3D-MoRSE), MAXDP, and PSA

- Variable Selection: Genetic algorithm-coupled partial least squares (GA-PLS) and stepwise multiple regression

- Validation: Leave-group-out cross-validation technique

- Performance Metrics: Mean absolute percentage error (MAPE), standard deviation of error of prediction (SDEP)

Results: The calculated MAPE values ranged from 2.9 to 3.6, and SDEP values ranged from 0.31 to 0.36 for both MLR and ANN methods, indicating statistically comparable performance. The 3D-QSAR HASL method performed less effectively than the 2D approaches in this specific application [6]. This demonstrates that traditional Hansch-type approaches (implemented via MLR) can perform as well as more advanced computational methods for certain congeneric series.
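For readers unfamiliar with the two metrics, the sketch below shows how they are commonly defined: MAPE as the mean of |observed − predicted| / |observed|, expressed in percent, and SDEP as the square root of the mean squared prediction error. The exact conventions used in the cited study are not given here, so these definitions are an assumption, and the observed and predicted values are invented.

```python
import math

def mape(observed, predicted):
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs(o - p) / abs(o)
                       for o, p in zip(observed, predicted)) / len(observed)

def sdep(observed, predicted):
    """Standard deviation of error of prediction (root mean squared error)."""
    return math.sqrt(sum((o - p) ** 2
                         for o, p in zip(observed, predicted)) / len(observed))

# Hypothetical observed and cross-validated predicted binding affinities (pKi).
observed = [8.0, 7.0, 6.0, 9.0]
predicted = [8.2, 6.8, 6.3, 8.7]
print(round(mape(observed, predicted), 2), round(sdep(observed, predicted), 3))
```

Because MAPE is relative to the observed value, it is only meaningful when activities are far from zero, as is the case for affinities on a p-scale.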

Case Study: SARS-CoV-2 Mᴾʳᵒ Inhibitors

A recent study on SARS-CoV-2 main protease inhibitors provides insight into the comparative performance of classical and modern QSAR approaches [7].

Experimental Protocol:

- Dataset: 76 non-covalent inhibitors with different chemotypes and evenly distributed activity (pIC₅₀: 4.00-7.74)

- Data Partitioning: 56 molecules in the training set and 20 molecules in the test set (26% of the dataset, selected by activity stratification)

- 2D-QSAR Descriptors: 6 physicochemical descriptors (MW, TPSA, #RB, NumHAcceptors, NumHDonors, RingCount) and fingerprints (RDKit, Morgan, MACCS keys)

- Modeling Methods: Support Vector Machine, Gaussian Process Regression, Random Forest, Multilayer Perceptron

- Validation: Training set r², cross-validated q², test set r²

Results: The best-performing 2D-QSAR model (MLP with Morgan fingerprints) achieved r² training = 1.00, q² CV = 0.80, and r² test = 0.72, while the best 3D-QSAR model (MLP) achieved r² training = 1.00, q² CV = 0.82, and r² test = 0.72 [7]. This demonstrates comparable predictive ability between well-constructed 2D and 3D models on this dataset.

Table 2: Performance Comparison of QSAR Methodologies Across Studies

| Study/Application | Methodology | Statistical Performance | Relative Advantages |

|---|---|---|---|

| Histamine H₃ Receptor Antagonists [6] | MLR (Hansch-type) | MAPE: 2.9-3.6; SDEP: 0.31-0.36 | Simplicity, interpretability, equal performance to advanced methods |

| Histamine H₃ Receptor Antagonists [6] | ANN | MAPE: 2.9-3.6; SDEP: 0.31-0.36 | Handling nonlinear relationships |

| Histamine H₃ Receptor Antagonists [6] | 3D-QSAR (HASL) | Inferior to 2D methods | - |

| SARS-CoV-2 Mᴾʳᵒ Inhibitors [7] | 2D-QSAR (MLP) | r² test = 0.72 | Fast calculation, no alignment needed |

| SARS-CoV-2 Mᴾʳᵒ Inhibitors [7] | 3D-QSAR (Field) | r² test = 0.71 | Spatial interpretation, field visualization |

| Bioactive Conformations [25] | 2D Descriptors | Variable performance | Computational efficiency |

| Bioactive Conformations [25] | 3D Descriptors | Variable performance | Structural specificity |

| Bioactive Conformations [25] | 2D+3D Combined | Superior to either alone | Complementary information |

Methodological Workflows and Applications

Workflow: Classical Hansch Analysis

The standard methodology for implementing Hansch analysis follows a systematic protocol [24] [22] [23]:

- Compound Selection: Assemble a congeneric series with measured biological activities

- Descriptor Calculation:

  - Calculate log P values (experimentally or computationally)

  - Compile Hammett σ constants from literature

  - Obtain steric parameters (Eₛ, molar refractivity)

- Model Development:

  - Perform multiple linear regression analysis

  - Test both linear and parabolic lipophilicity relationships

  - Include interaction terms if theoretically justified

- Model Validation:

  - Statistical significance testing (F-test, t-test)

  - Cross-validation (leave-one-out, leave-group-out)

  - External validation with test set compounds

- Model Interpretation:

  - Analyze coefficient magnitudes and signs

  - Relate to mechanistic understanding of transport and binding

  - Generate predictions for new analogs

Workflow: Free-Wilson Analysis

The Fujita-Ban variant of Free-Wilson analysis follows this experimental protocol [24] [22]:

- Data Matrix Preparation: Create a matrix with rows representing compounds and columns representing presence/absence of specific substituents at defined positions

- Reference Compound Selection: Choose an appropriate reference structure (often the unsubstituted parent)

- Regression Analysis: Perform multiple linear regression using indicator variables (0/1) as independent variables and log(BA) as dependent variable

- Statistical Validation: Evaluate model significance and contribution of individual substituents

- Predictive Application: Use group contributions to predict activities of untested substitution patterns within the defined chemical space
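The protocol above can be sketched end to end with a small least-squares fit. In the example below, each compound is described by the set of substituents it carries, the unsubstituted parent is the reference (an empty set), and the intercept μ plus one contribution per substituent are obtained by solving the normal equations. The substituent labels, activities, and helper functions are hypothetical, and the hand-written solver stands in for whatever regression package would normally be used.

```python
def solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_fujita_ban(compounds, activities):
    """Least-squares fit of activity = mu + sum of substituent contributions."""
    labels = sorted({s for subs in compounds for s in subs})
    # Indicator matrix: leading 1 for mu, then 0/1 per substituent.
    x = [[1.0] + [1.0 if s in subs else 0.0 for s in labels] for subs in compounds]
    k = len(labels) + 1
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(x, activities)) for i in range(k)]
    coef = solve(xtx, xty)
    return coef[0], dict(zip(labels, coef[1:]))

def predict(mu, contributions, substituents):
    """Additive prediction for a substitution pattern built from known groups."""
    return mu + sum(contributions[s] for s in substituents)

# Hypothetical congeneric series; the empty set is the unsubstituted parent.
compounds = [set(), {"R1=Cl"}, {"R2=CH3"}, {"R1=Cl", "R2=CH3"}, {"R1=F"}]
activities = [5.0, 5.6, 5.3, 5.9, 5.2]  # e.g. log(1/C)
mu, contributions = fit_fujita_ban(compounds, activities)
print(round(predict(mu, contributions, {"R1=F", "R2=CH3"}), 2))
```

The final line predicts an untested combination of substituents that each appear elsewhere in the series. A substituent that never occurs in the training data has no fitted contribution, so `predict` would raise a `KeyError` for it, which mirrors the method's inability to extrapolate beyond the substituents analyzed.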

Applications and Limitations

Hansch Analysis Applications:

- Enzyme inhibition studies (e.g., dihydrofolate reductase inhibitors) [24]

- Receptor binding affinity prediction (β-adrenergic, benzodiazepine, dopamine receptors) [24]

- Pharmacokinetic parameter modeling (absorption, distribution) [24]

- Multidrug resistance reversal agent design [24]

Free-Wilson Analysis Applications:

- Analgesic benzomorphans and morphinans [24]

- Antibacterial phenol and chlorophenol activities [24]

- Dihydrofolate reductase inhibition by 2,4-diaminopyrimidines [24]

Key Limitations:

- Hansch Analysis: Requires accurate physicochemical parameters; assumes linear free-energy relationships; limited to congeneric series [22]

- Free-Wilson Analysis: Cannot predict substituents not included in original analysis; requires many compounds for statistical significance; assumes strict additivity [24] [22]

Visualization of QSAR Method Relationships

Table 3: Essential Computational Tools for QSAR Research

| Tool Category | Specific Tools/Software | Key Functionality | Application in Foundational QSAR |

|---|---|---|---|

| Descriptor Calculation | RDKit [7], Dragon | Calculation of molecular descriptors | Generation of physicochemical parameters (log P, σ, Eₛ equivalents) |

| Statistical Analysis | Various ML packages [7] [26] | Multiple linear regression, machine learning | Model development for Hansch and Free-Wilson analyses |

| 3D-QSAR Platforms | Flare [7], CoMFA | Field-based alignment and analysis | Comparative methodology for validation studies |

| Validation Tools | Cross-validation algorithms [6] [7] | Model performance assessment | Statistical validation of classical QSAR models |

| Data Mining | ChEMBL [26], PDB [25] | Bioactivity and structural data | Compound series selection and bioactive conformation analysis |

The theoretical foundations established by Hammett, Hansch-Fujita, and Free-Wilson approaches continue to inform modern QSAR research, including contemporary comparisons between 2D and 3D methodologies. The classical principles of LFER, multiparameter optimization, and group additivity remain conceptually relevant in current chemoinformatic approaches [18] [23].

Recent comparative studies demonstrate that the predictive performance of classical 2D approaches often equals or surpasses more computationally intensive 3D methods for congeneric series [6] [7] [26]. However, the integration of classical principles with modern 3D structural information represents the most promising direction, as evidenced by studies showing superior performance when combining 2D and 3D descriptors [25].

The enduring relevance of these foundational approaches lies in their rigorous mathematical framework, interpretability, and demonstrated success in drug discovery programs. As QSAR continues to evolve with advances in machine learning and structural biology, the theoretical foundations established by these pioneering methodologies provide essential principles for meaningful model interpretation and application in therapeutic development.

In modern computational chemistry and drug design, the principle that structurally similar molecules exhibit similar properties or activities is foundational. The quantitative structure-activity relationship (QSAR) paradigm formalizes this principle into predictive models. A critical divergence in QSAR methodology lies in how "structure" is represented: either as two-dimensional (2D) molecular graphs or three-dimensional (3D) spatial constructs. 2D representations reduce a molecule to a set of numerical descriptors derived from its connectivity and atomic composition, independent of its shape or conformation. In contrast, 3D representations explicitly incorporate the spatial orientation of atoms and molecules, capturing steric, electrostatic, and other field-based properties that dictate molecular interactions [27]. This guide provides an objective comparison of how these two approaches capture chemical space, supported by experimental data and detailed methodologies to inform selection for specific research applications.

Core Conceptual Differences: A Theoretical Framework

The fundamental distinction between 2D and 3D methods originates from their treatment of molecular geometry.

- 2D-QSAR (Conformation-Invariant Descriptors): This approach uses descriptors that are invariant to the molecule's conformation and orientation in space. These are often "summary" descriptors, such as molecular weight (MW), partition coefficient (LogP), topological polar surface area (TPSA), counts of rotatable bonds (#RB), hydrogen bond donors/acceptors, and molecular fingerprints that encode substructures [7] [27]. The molecular structure is treated as a graph, so the resulting descriptors do not change when the molecule is rotated, translated, or placed in a different conformation.

- 3D-QSAR (Spatially-Dependent Descriptors): This approach requires a three-dimensional molecular structure and generates descriptors that are intimately tied to its shape and interaction fields in space. The process typically involves placing each molecule within a 3D grid and using probe atoms to measure steric (van der Waals) and electrostatic (Coulombic) interaction energies at thousands of grid points. Methods like Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Indices Analysis (CoMSIA) are classic examples [8] [27]. The quality of these descriptors is highly sensitive to the conformational geometry and, crucially, the correct alignment of all molecules in the dataset relative to a common 3D frame of reference [27].

The diagram below illustrates the fundamental workflow difference between these two approaches.

Performance Comparison: Quantitative Experimental Data

Direct comparisons in published studies reveal that the performance of 2D vs. 3D methods is highly context-dependent, with both demonstrating strengths in different scenarios.

Table 1: Performance Comparison of 2D- and 3D-QSAR Models on SARS-CoV-2 Mpro Inhibitors [7]

| QSAR Type | Regression Model | r² Training Set | q² Training Set (CV) | r² Test Set |

|---|---|---|---|---|

| 2D-QSAR (Descriptors & Fingerprints) | MLP (Morgan FP) | 1.00 | 0.80 | 0.72 |

| 2D-QSAR (Descriptors & Fingerprints) | SVM (MACCS keys) | 0.96 | 0.80 | 0.50 |

| 3D-QSAR | MLP | 1.00 | 0.82 | 0.72 |

| 3D-QSAR | Field QSAR | 0.96 | 0.81 | 0.71 |

Table 2: Performance of Machine Learning Models using 2D and 3D Descriptors for Pyrazole Corrosion Inhibitors [15]

| Model | Descriptor Type | Training Set R² | Test Set R² |

|---|---|---|---|

| XGBoost | 2D | 0.96 | 0.75 |

| XGBoost | 3D | 0.94 | 0.85 |

| SVR | 2D | 0.93 | 0.67 |

| SVR | 3D | 0.91 | 0.64 |

Table 3: Comparison of QSAR Methods for Predicting H3 Receptor Antagonist Binding Affinities [6]

| QSAR Method | Description | Statistical Performance (MAPE) |

|---|---|---|

| Multiple Linear Regression (MLR) | 2D Method | 2.9 - 3.6 |

| Artificial Neural Network (ANN) | 2D Method | 2.9 - 3.6 |

| HASL | 3D Method | Not as good as 2D methods |

Insights from Comparative Data

- Comparable Predictive Power: In the SARS-CoV-2 Mpro study, the best 2D and 3D models achieved an identical test set r² of 0.72, indicating that for this dataset, both approaches can yield models with equivalent predictive accuracy [7]. This finding is critical as it suggests that simpler 2D methods can sometimes match the predictive performance of more computationally intensive 3D methods.

- Advantage of 3D Descriptors with Advanced ML: In the corrosion inhibitor study, the XGBoost model using 3D descriptors achieved a superior test set R² (0.85) compared to its 2D counterpart (0.75). This highlights that for certain endpoints, 3D structural information can enhance model generalizability when leveraged by powerful machine learning algorithms [15].

- Simplicity Can Be Sufficient: The H3 receptor antagonist study concluded that simple traditional 2D methods like Multiple Linear Regression (MLR) can be as reliable as more advanced and sophisticated methods like ANN and 3D-QSAR analyses for predicting binding affinities [6].

Experimental Protocols: Methodologies for Model Construction

To ensure reproducibility and informed method selection, the following sections detail the standard protocols for building 2D- and 3D-QSAR models.

Protocol for 2D-QSAR Model Development

1. Dataset Curation: A set of molecules with experimentally determined biological activities (e.g., IC₅₀, Ki) is assembled. The activity is typically converted to a negative logarithmic scale (pIC₅₀ = -log₁₀ IC₅₀, with IC₅₀ in molar units), which compresses the wide range of raw values and usually gives a distribution closer to normal [28]. The dataset is then divided into training and test sets, often using activity stratification.

2. Molecular Descriptor Calculation: 2D molecular structures (e.g., in SMILES format) are used as input to software like Dragon [28] or RDKit [7] to calculate thousands of molecular descriptors. These can include physicochemical properties (MW, LogP, TPSA), topological indices, and fingerprint bits (e.g., RDKit, Morgan, MACCS keys) [7].

3. Data Preprocessing and Variable Selection: The initial descriptor matrix is reduced by removing constant, near-constant, and highly correlated descriptors. Feature selection algorithms like Genetic Algorithm-Partial Least Squares (GA-PLS) or Ordered Predictors Selection (OPS) are then employed to select a subset of descriptors most relevant to the biological activity [6] [28].

4. Model Building and Validation: Machine learning methods such as Support Vector Machine (SVM), Random Forest (RF), Multilayer Perceptron (MLP), or Gaussian Process Regression (GPR) are applied to the training set [15] [7]. Model performance is rigorously assessed using internal cross-validation (e.g., leave-one-out, yielding q²) on the training set and external validation using the held-out test set (yielding the test set r²) [7].
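Steps 1 and 3 of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration, not the procedure used in the cited studies: the function names `to_pic50` and `filter_descriptors`, the variance tolerance, the 0.95 correlation cutoff, and the toy data are all assumptions chosen for the example.

```python
import numpy as np

def to_pic50(ic50_nm):
    """Convert IC50 values given in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    ic50_molar = np.asarray(ic50_nm, dtype=float) * 1e-9
    return -np.log10(ic50_molar)

def filter_descriptors(X, var_tol=1e-8, corr_cutoff=0.95):
    """Return the column indices kept after dropping near-constant descriptors
    and, greedily, any descriptor highly correlated with one already kept.
    Both thresholds are illustrative assumptions."""
    X = np.asarray(X, dtype=float)
    non_constant = [j for j in range(X.shape[1]) if X[:, j].var() > var_tol]
    selected = []
    for j in non_constant:
        is_redundant = any(
            abs(np.corrcoef(X[:, j], X[:, k])[0, 1]) > corr_cutoff
            for k in selected
        )
        if not is_redundant:
            selected.append(j)
    return selected

# Toy data: 5 hypothetical compounds, 4 hypothetical descriptors.
ic50_nm = [10.0, 100.0, 1000.0, 50.0, 5.0]
X = np.array([
    [1.0,  2.0, 7.0, 0.3],
    [2.0,  4.0, 7.0, 0.9],
    [3.0,  6.0, 7.0, 0.1],
    [4.0,  8.0, 7.0, 0.8],
    [5.0, 10.0, 7.0, 0.2],
])

pic50 = to_pic50(ic50_nm)      # 10 nM corresponds to pIC50 = 8
kept = filter_descriptors(X)   # column 1 duplicates column 0; column 2 is constant
```

The greedy filter keeps whichever member of a correlated pair appears first, so the column order affects the result; a real workflow would usually follow this with a proper variable selection step such as GA-PLS.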

Protocol for 3D-QSAR Model Development

1. Data Collection and 3D Structure Generation: Similar to 2D-QSAR, a dataset of compounds with known activity is compiled. 2D structures are converted into 3D models using tools like RDKit or Sybyl, followed by geometry optimization using molecular mechanics (e.g., MM+ force field) or quantum mechanical methods (e.g., AM1) to obtain low-energy conformations [27].

2. Molecular Alignment: This is a critical and often challenging step. All molecules must be superimposed in a common 3D space based on a presumed bioactive conformation. Alignment can be guided by a maximum common substructure (MCS) [7], a common scaffold (e.g., Bemis-Murcko scaffold) [27], or a known active compound used as a template.

3. Field Descriptor Calculation: The aligned molecules are placed within a 3D grid. A probe atom (e.g., an sp³ carbon with a +1 charge) is used to calculate steric (Lennard-Jones) and electrostatic (Coulombic) interaction energies at each grid point. This is the core of the CoMFA method. The CoMSIA method extends this by calculating similarity indices for steric, electrostatic, hydrophobic, and hydrogen bond donor/acceptor fields, often providing smoother and more interpretable results [8] [27].

4. Model Building, Validation, and Interpretation: Partial Least Squares (PLS) regression is the standard technique to correlate the thousands of field descriptors with biological activity [27]. The model is validated via cross-validation. A key output is 3D contour maps, which visually indicate regions where specific molecular properties (e.g., steric bulk, electronegativity) favorably or unfavorably influence activity, providing direct design insights [7] [27].
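The field calculation in step 3 can be illustrated with a simplified, CoMFA-like sketch. It assumes a single set of Lennard-Jones parameters (`epsilon`, `r_min`) for every probe-atom pair, a +1 probe charge, and a 30 kcal/mol energy cap; real implementations use atom-type-specific force-field parameters, so the function `probe_fields` and its defaults are hypothetical.

```python
import numpy as np

def probe_fields(coords, charges, grid_points, probe_charge=1.0,
                 epsilon=0.1, r_min=3.4, cutoff=30.0):
    """Steric (Lennard-Jones 12-6) and electrostatic (Coulomb) energies of a
    probe at each grid point, summed over the atoms of one aligned molecule.
    epsilon and r_min are uniform placeholder parameters (an assumption)."""
    coords = np.asarray(coords, dtype=float)             # (n_atoms, 3), Angstrom
    charges = np.asarray(charges, dtype=float)           # (n_atoms,), in e
    grid_points = np.asarray(grid_points, dtype=float)   # (n_grid, 3)
    # Probe-atom distances, shape (n_grid, n_atoms)
    d = np.linalg.norm(grid_points[:, None, :] - coords[None, :, :], axis=2)
    d = np.maximum(d, 1e-6)                              # avoid division by zero
    ratio6 = (r_min / d) ** 6
    steric = (epsilon * (ratio6 ** 2 - 2.0 * ratio6)).sum(axis=1)
    # 332.06 converts e^2/Angstrom to kcal/mol
    electrostatic = (332.06 * probe_charge * charges / d).sum(axis=1)
    # Cap extreme energies near atomic nuclei
    return (np.clip(steric, -cutoff, cutoff),
            np.clip(electrostatic, -cutoff, cutoff))

# Regular grid with 2 Angstrom spacing around a toy two-atom "molecule".
axis = np.linspace(-4.0, 4.0, 5)
grid = np.stack(np.meshgrid(axis, axis, axis, indexing="ij"), axis=-1).reshape(-1, 3)
coords = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0]]
charges = [0.2, -0.2]

steric, electrostatic = probe_fields(coords, charges, grid)
# One row of the field descriptor matrix that PLS would later consume.
descriptor_row = np.concatenate([steric, electrostatic])
```

Repeating this for every aligned molecule on the same grid yields one row per compound and two columns per grid point, which is why the descriptor count reaches the thousands and PLS is needed in step 4.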

The Scientist's Toolkit: Essential Research Reagents and Software

Table 4: Key Software and Tools for QSAR Modeling

| Tool Name | Type | Primary Function in QSAR |
|---|---|---|
| Dragon [28] | Software | Calculates a vast array of >1,481 0D, 1D, 2D, and 3D molecular descriptors. |
| RDKit [7] | Open-Source Cheminformatics Library | Used for 2D descriptor and fingerprint calculation, 3D conformation generation, and maximum common substructure (MCS) alignment. |
| Gaussian 09 [8] | Software | Performs quantum mechanical calculations to optimize 3D geometries and compute electronic properties (e.g., dipole moment, HOMO/LUMO energies) for 2D-QSAR descriptors. |
| Flare/Cresset FieldQSAR [7] | Software | Implements 3D-QSAR methods using field points from the XED force field as descriptors, providing advanced visualization of model coefficients. |
| SYBYL [27] | Software Suite | A traditional computational chemistry platform containing implementations of classic 3D-QSAR methods like CoMFA and CoMSIA. |

The choice between 2D and 3D QSAR is not about one being universally superior to the other, but about selecting the right tool for the specific research question and constraints.

- Choose 2D-QSAR when: Your priority is speed and simplicity. It is well suited to high-throughput virtual screening of large chemical libraries, to cases where the bioactive conformation is unknown, and to situations that call for a rapid, robust predictive model without the complexities of alignment. The comparative data show that it can match, and in some cases surpass, the predictive accuracy of 3D methods [6] [7].

- Choose 3D-QSAR when: Your primary goal is mechanistic interpretation and rational design. It is indispensable when you need visual, spatial guidance on where and how to modify a molecule (e.g., "add a bulky group here," "make this region more electronegative") [7] [27]. It is most effective when a reliable alignment hypothesis is available and when the activity is strongly influenced by steric and electrostatic complementarity with a target.

In practice, a hybrid or consensus approach is often the most powerful strategy. Leveraging 2D methods for fast filtering and 3D methods for detailed lead optimization combines the strengths of both worlds, providing a comprehensive toolkit for navigating chemical space in modern drug discovery and materials science.

Implementation Strategies: When and How to Apply Each QSAR Approach

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational drug design, providing mathematical frameworks that correlate chemical structure with biological activity [23]. Among the diverse QSAR methodologies, classical 2D approaches utilizing Multiple Linear Regression (MLR) and Partial Least Squares (PLS) remain fundamentally important despite the emergence of more complex machine learning and 3D techniques. These methods establish quantitative relationships using molecular descriptors derived from two-dimensional structural representations, offering interpretability, computational efficiency, and proven predictive capability across diverse pharmaceutical applications [6] [11].

The foundational principle of 2D-QSAR rests on the paradigm that molecular structure encodes determinants of biological activity. MLR and PLS serve as the statistical engines that decode these relationships, transforming structural information into predictive models that guide lead optimization in drug discovery campaigns [29]. This guide provides a comprehensive technical comparison of these classical techniques, examining their theoretical bases, implementation protocols, performance characteristics, and appropriate applications within modern computational chemistry workflows.

Theoretical Foundations and Algorithmic Mechanisms

Multiple Linear Regression (MLR) in QSAR

Multiple Linear Regression operates on the principle of establishing a direct linear relationship between multiple independent variables (molecular descriptors) and a dependent variable (biological activity). The general form of an MLR equation in QSAR is expressed as:

BA = β₀ + β₁D₁ + β₂D₂ + ... + βₙDₙ + ε

Where BA represents the biological activity, β₀ is the regression constant, β₁...βₙ are regression coefficients for descriptors D₁...Dₙ, and ε denotes the error term [30]. The method requires careful descriptor selection to avoid overfitting, particularly because MLR assumes descriptor independence and lacks inherent mechanisms for handling correlated variables [6].
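As a concrete illustration of this equation, the coefficients β₀...βₙ can be estimated by ordinary least squares. The sketch below uses NumPy's `numpy.linalg.lstsq`; the helper name `fit_mlr` and the toy descriptor values are assumptions made for the example, and the activities are generated without an error term so the known coefficients are recovered exactly.

```python
import numpy as np

def fit_mlr(D, activity):
    """Least-squares fit of BA = b0 + b1*D1 + ... + bn*Dn.
    Returns the coefficient vector [b0, b1, ..., bn] and the training r^2."""
    D = np.asarray(D, dtype=float)
    y = np.asarray(activity, dtype=float)
    A = np.column_stack([np.ones(len(y)), D])   # leading column of ones -> b0
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    residuals = y - A @ beta
    r2 = 1.0 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)
    return beta, r2

# Toy data: 6 hypothetical compounds, 2 hypothetical descriptors.
D = np.array([
    [0.5, 1.0],
    [1.0, 3.0],
    [1.5, 2.0],
    [2.0, 5.0],
    [2.5, 4.0],
    [3.0, 6.0],
])
# Activities built from known coefficients (no noise), so the fit is exact.
activity = 1.0 + 2.0 * D[:, 0] - 0.5 * D[:, 1]

beta, r2 = fit_mlr(D, activity)
```

Each entry of `beta` after the first reads directly as the change in predicted activity per unit change of the corresponding descriptor, which is the interpretability that makes MLR attractive.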

MLR's primary strength lies in its model interpretability; each coefficient quantitatively indicates how a unit change in a specific descriptor influences biological activity. For instance, in a QSAR study of glycogen synthase kinase-3β (GSK-3β) inhibitors, MLR yielded a highly interpretable model with standard parameters (S-value = 0.37, F-value = 37.17, r² = 0.855) that clearly indicated the contribution of specific descriptors like Verloop L and Lipole Z components [29]. However, this interpretability depends on the descriptors being only weakly intercorrelated: MLR is particularly sensitive to multicollinearity among independent variables, which inflates the variance of the coefficient estimates and makes individual coefficients unreliable.
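One common way to check for multicollinearity before fitting an MLR model is the variance inflation factor (VIF), defined for descriptor j as 1 / (1 - R²ⱼ), where R²ⱼ comes from regressing that descriptor on all the others. The sketch below is an illustration under assumed toy data; the function name and the rule-of-thumb thresholds mentioned afterwards are not taken from the cited studies.

```python
import numpy as np

def variance_inflation_factors(D):
    """VIF_j = 1 / (1 - R_j^2), where R_j^2 is obtained by regressing
    descriptor j on all remaining descriptors (with an intercept)."""
    D = np.asarray(D, dtype=float)
    n, p = D.shape
    vifs = []
    for j in range(p):
        target = D[:, j]
        others = np.delete(D, j, axis=1)
        A = np.column_stack([np.ones(n), others])
        beta, *_ = np.linalg.lstsq(A, target, rcond=None)
        resid = target - A @ beta
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        vifs.append(np.inf if r2 >= 1.0 else 1.0 / (1.0 - r2))
    return np.array(vifs)

# Toy descriptors: d3 is almost a copy of d1, d2 is independent.
rng = np.random.default_rng(0)
d1 = rng.normal(size=30)
d2 = rng.normal(size=30)
d3 = d1 + 0.05 * rng.normal(size=30)

vif = variance_inflation_factors(np.column_stack([d1, d2, d3]))
```

Values near 1 indicate an essentially independent descriptor. Thresholds of roughly 5 to 10 are often quoted as rules of thumb for problematic collinearity, but the appropriate cutoff is a judgment call.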

Partial Least Squares (PLS) in QSAR

Partial Least Squares regression addresses the multicollinearity limitation of MLR by projecting the original descriptors into a new set of orthogonal components called latent variables. These components maximize the covariance between the descriptor matrix (X) and the activity vector (Y) [31]. The PLS algorithm essentially performs simultaneous dimensionality reduction and regression, making it particularly suited for datasets with numerous, potentially correlated descriptors.

The mathematical formulation of PLS involves decomposing both the X and Y matrices to extract latent structures:

X = TPᵀ + E

Y = UQᵀ + F

Where T and U are score matrices, P and Q are loading matrices, and E and F represent residual matrices [31]. The regression is then performed using these latent variables rather than the original descriptors, effectively handling situations where the number of descriptors exceeds the number of compounds or when significant intercorrelation exists among descriptors.
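The decomposition can be made concrete with a small NIPALS-style sketch for the single-response case (often called PLS1). This is an illustrative implementation under stated assumptions, not the code used in the cited work: with one activity column, the Y-side scores are not computed separately and the activity is regressed directly on the X scores T. The function names and toy data are hypothetical.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """NIPALS-style PLS1: extract orthogonal score vectors t that maximize
    covariance with y, deflating X and y after each component."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean              # mean-centering
    T, W, P, q = [], [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk                            # weight vector
        w /= np.linalg.norm(w)
        t = Xk @ w                               # X scores (a column of T)
        tt = t @ t
        p = Xk.T @ t / tt                        # X loadings (a column of P)
        qa = (yk @ t) / tt                       # y loading
        Xk = Xk - np.outer(t, p)                 # deflation: what remains is E
        yk = yk - qa * t                         # what remains is F
        T.append(t); W.append(w); P.append(p); q.append(qa)
    T, W, P = np.array(T).T, np.array(W).T, np.array(P).T
    q = np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)       # coefficients in descriptor space
    return {"coef": coef, "x_mean": x_mean, "y_mean": y_mean, "T": T, "P": P}

def pls1_predict(model, X):
    X = np.asarray(X, dtype=float)
    return (X - model["x_mean"]) @ model["coef"] + model["y_mean"]

# Toy data: the third descriptor is strongly correlated with the first.
rng = np.random.default_rng(0)
base = rng.normal(size=(20, 2))
X = np.column_stack([base[:, 0], base[:, 1],
                     base[:, 0] + 0.1 * rng.normal(size=20)])
y = X[:, 0] - 2.0 * X[:, 1] + 0.5 * X[:, 2] + 3.0 + 0.1 * rng.normal(size=20)

model_2 = pls1_fit(X, y, n_components=2)     # reduced model
model_full = pls1_fit(X, y, n_components=3)  # as many components as descriptors
y_hat = pls1_predict(model_2, X)
```

With as many components as descriptors, the PLS coefficients coincide with the ordinary least-squares solution; using fewer components is what provides the regularization against correlated descriptors.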

PLS has demonstrated particular utility in advanced QSAR implementations such as image-based descriptor analysis. In a study of pleuromutilin derivatives, PLS successfully handled pixel-based descriptors extracted from molecular images, achieving impressive predictive performance (Q² = 0.9495, R² = 0.9586) for antibacterial activity prediction [31]. This capability to extract meaningful patterns from high-dimensional descriptor spaces makes PLS a robust choice for complex QSAR problems.

Experimental Protocols and Implementation

Standardized QSAR Workflow

The development of reliable MLR and PLS QSAR models follows a systematic workflow encompassing data preparation, descriptor calculation, model construction, and validation. Adherence to standardized protocols ensures model robustness and predictive reliability, with particular attention to validation techniques that guard against overfitting and chance correlations.

Detailed Methodological Procedures

Data Preparation and Descriptor Calculation

The initial phase involves curating a structurally diverse dataset of compounds with experimentally determined biological activities (typically IC₅₀, EC₅₀, or Kᵢ values). Following data collection, molecular structures are drawn using chemical drawing software (e.g., ChemDraw) and subjected to geometry optimization using molecular mechanics (MM+ force field) followed by semi-empirical methods (AM1 or PM3) until the root mean square gradient reaches ≤0.01 kcal/(mol·Å) [5].

Molecular descriptors are then calculated using specialized software packages such as DRAGON, CODESSA, or HyperChem. These encompass constitutional, topological, geometrical, electrostatic, and quantum-chemical descriptors [11] [5]. Descriptor pre-processing eliminates constant or near-constant variables, followed by dataset division into training and test sets (typically 70-80% for training, 20-30% for testing) using random sampling or systematic approaches like Kennard-Stone algorithm.
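The Kennard-Stone algorithm mentioned above selects training compounds so that they cover the descriptor space evenly: it starts from the two most distant compounds and then repeatedly adds the compound whose nearest already-selected neighbor is farthest away. The following is a minimal sketch using Euclidean distance; the function name and the one-dimensional toy data are assumptions, and in practice descriptors would normally be scaled first.

```python
import numpy as np

def kennard_stone(X, n_train):
    """Return (train_indices, test_indices) chosen by the Kennard-Stone rule."""
    X = np.asarray(X, dtype=float)
    # Full pairwise Euclidean distance matrix
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    # Seed with the two most distant compounds
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_train:
        # Distance from each remaining compound to its closest selected one
        min_d = dist[np.ix_(remaining, selected)].min(axis=1)
        pick = remaining[int(np.argmax(min_d))]
        selected.append(pick)
        remaining.remove(pick)
    return selected, remaining

# Toy data: 5 hypothetical compounds described by a single descriptor.
X = np.array([[0.0], [1.0], [2.0], [3.0], [10.0]])
train_idx, test_idx = kennard_stone(X, n_train=3)
```

Because the procedure is deterministic, it gives a reproducible split, at the cost of a test set that tends to lie inside the region spanned by the training set.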

Model Development Protocols

MLR Implementation: For MLR analysis, descriptor selection employs algorithms like Genetic Algorithm-PLS (GA-PLS) or heuristic method (HM) to identify the most relevant, minimally correlated descriptors. The heuristic approach implemented in CODESSA software begins with two-parameter correlations, progressively adding descriptors while monitoring statistical parameters (R², F-test, t-test, R²cv) until optimal model complexity is achieved [5]. This process yields a linear equation with specific coefficients for each descriptor.

PLS Implementation: PLS modeling utilizes the entire descriptor matrix after pre-processing (mean-centering and scaling). The critical step involves determining the optimal number of latent components through cross-validation, typically using leave-one-out (LOO) or leave-group-out (LGO) approaches. The optimal component count maximizes the cross-validated R² (Q²) while preventing overfitting. Implementation is facilitated by software packages like MATLAB, SIMCA, or R packages with specialized PLS modules [31].

Model Validation Procedures

Rigorous validation is essential for establishing model reliability. Internal validation employs LOO or LGO cross-validation to calculate Q². External validation uses the test set to determine predictive R² (R²pred). Additionally, Y-randomization tests (typically 100+ iterations) verify that models outperform chance correlations, with the resulting random models exhibiting significantly lower R² and Q² values [30]. Criteria such as those proposed by Golbraikh and Tropsha further validate predictive capability through parameters like |R₀² - R'₀²| < 0.3 and 0.85 ≤ k ≤ 1.15 [30].
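Leave-one-out Q² and the Y-randomization test can be sketched as follows. For brevity the example uses an ordinary least-squares model as the learner and reports only Q² for the scrambled models; the function names, the synthetic data, and the choice to compute the total sum of squares around the mean of the full activity vector are assumptions of this illustration.

```python
import numpy as np

def ols_predict(X_train, y_train, X_new):
    """Fit least squares with an intercept on the training data, predict X_new."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    beta, *_ = np.linalg.lstsq(A, y_train, rcond=None)
    return np.column_stack([np.ones(len(X_new)), X_new]) @ beta

def q2_loo(X, y):
    """Q^2 = 1 - PRESS / SS_total, with each compound predicted by a model
    trained on all the others."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    press = 0.0
    for i in range(len(y)):
        mask = np.arange(len(y)) != i
        pred = ols_predict(X[mask], y[mask], X[i:i + 1])[0]
        press += (y[i] - pred) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)

def y_randomization(X, y, n_iter=100, seed=0):
    """Q^2 values of models refit on randomly permuted activities."""
    rng = np.random.default_rng(seed)
    return np.array([q2_loo(X, rng.permutation(y)) for _ in range(n_iter)])

# Synthetic data: 25 hypothetical compounds, 2 descriptors, real signal plus noise.
rng = np.random.default_rng(42)
X = rng.normal(size=(25, 2))
y = 1.5 * X[:, 0] - 1.0 * X[:, 1] + 0.2 * rng.normal(size=25)

q2 = q2_loo(X, y)
q2_scrambled = y_randomization(X, y, n_iter=100)
```

A model that captures a genuine structure-activity relationship should give a Q² well above the whole distribution of scrambled values; if the two overlap, the apparent fit is likely a chance correlation.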

Performance Comparison and Experimental Data

Quantitative Performance Metrics